AI model evaluation is the process of testing how well artificial intelligence systems perform in real-world enterprise workflows, not just benchmarks. In agentic AI environments, this means validating how models interact with APIs, automation pipelines, and business logic to deliver reliable outputs at scale. Without end-to-end validation, most AI pilots fail to reach production or generate measurable ROI.

In 2026, the success of enterprise AI is no longer measured by the wow factor of a demo, but by the reliability of the AI agents running within your pipelines. While investment in generative AI continues to climb, a significant ROI gap has emerged. According to a16z’s 2026 enterprise survey, only about one-third of organizations have successfully transitioned their pilots into profitable production environments.

This stall is why Gartner predicts that 30% of generative AI projects will be abandoned by the end of 2026 due to poor data quality, escalating latency, and a failure to move beyond brittle prototypes. To bridge this gap, TPMS and vendor ops leaders must look beyond basic metrics and implement a holistic validation strategy that treats evaluation as the architect’s blueprint for the entire AI strategy.

To move an AI agent into production, evaluation must shift from static benchmarks to real-world validation of the entire system. This involves measuring how LLMS interact with APIS, the accuracy of retrieval-augmented generation (RAG) steps, and the reliability of multi-step automation workflows. By establishing a technical baseline that mirrors actual business objectives, ops leaders can build trust with stakeholders and ensure AI deployment delivers measurable business value.

Leading AI systems and machine learning teams ensure that validation begins at the roadmapping stage, moving beyond feature requests to an in-depth analysis of real-world workflows. When you skip this step, you risk deploying AI applications that fail to integrate with existing pipelines, leading to low AI adoption and project abandonment.

For example, if a team is architecting an agentic AI model to assist investors at a finance organization, the validation must go deeper than text quality. You must define how the agent interacts with legacy APIS and whether the outputs should be structured as a JSON object for a dashboard or a natural language summary. Evaluating for the end user also provides a clear baseline for data quality, free from the assumptions of those who won't be managing the deployed automation.

While the specific metrics depend on the use cases, ops leaders should use these questions to guide their validation strategy:

With the proliferation of powerful LLMS and computer vision models trained on internet-scale datasets, building a base model from scratch is rarely the most cost-effective path for enterprise AI developers. Choosing the right foundation models—those that already deliver outputs closest to your business objectives—can significantly reduce training data requirements and overall AI deployment timelines.

To find the right fit, you have to compare multiple AI models on metrics directly tied to their real-world use cases. While it is tempting to rely on high-level benchmarks like general accuracy, drilling down into specific task performance reveals the true TCO and the AI training journey ahead.

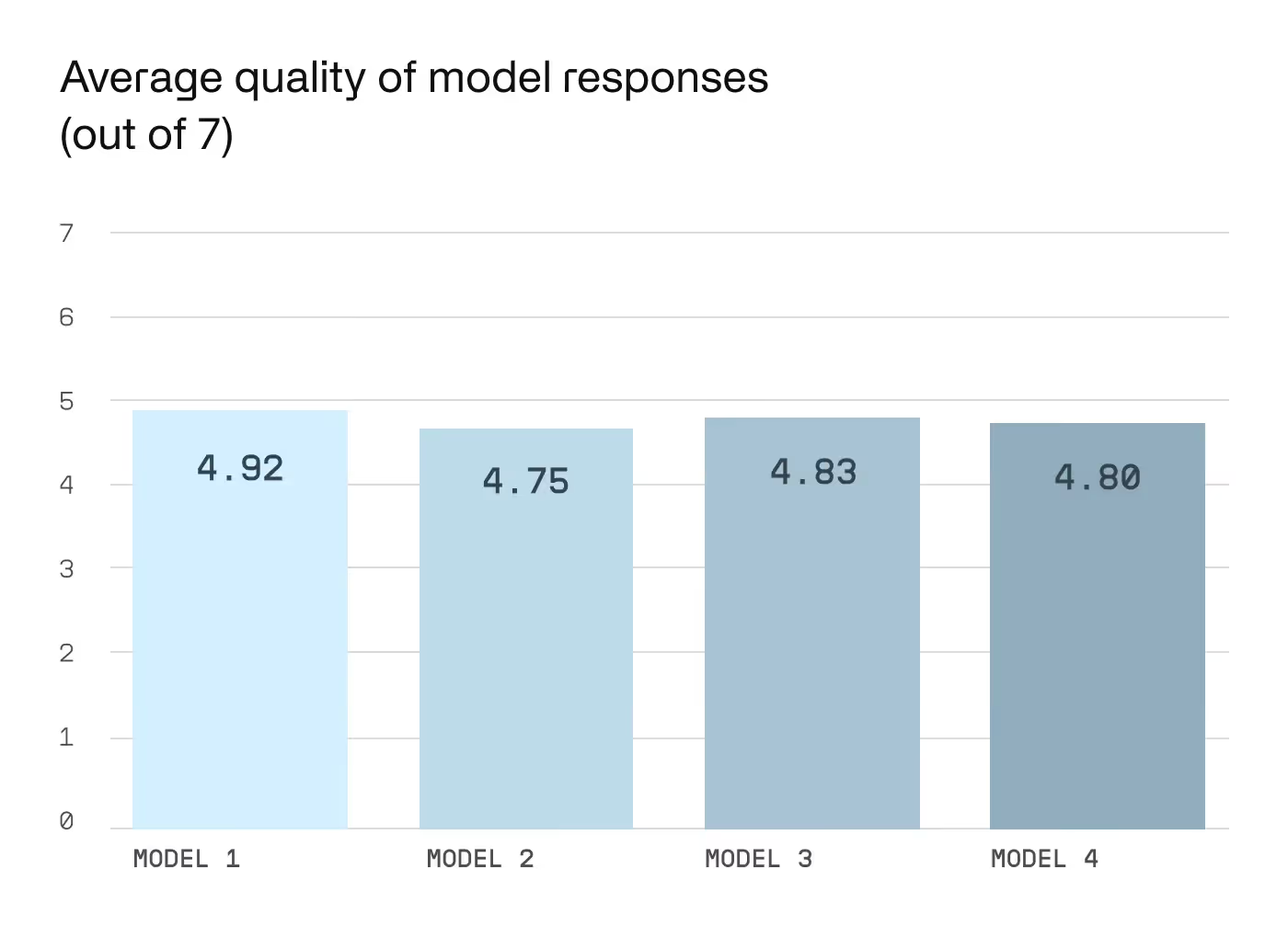

For instance, when evaluating LLMs for an investor assistant agentic AI, a general "average response quality" score is a poor baseline. These results are often subjective and fail to show how well a model handles the complex logic and APIS required for financial automation.

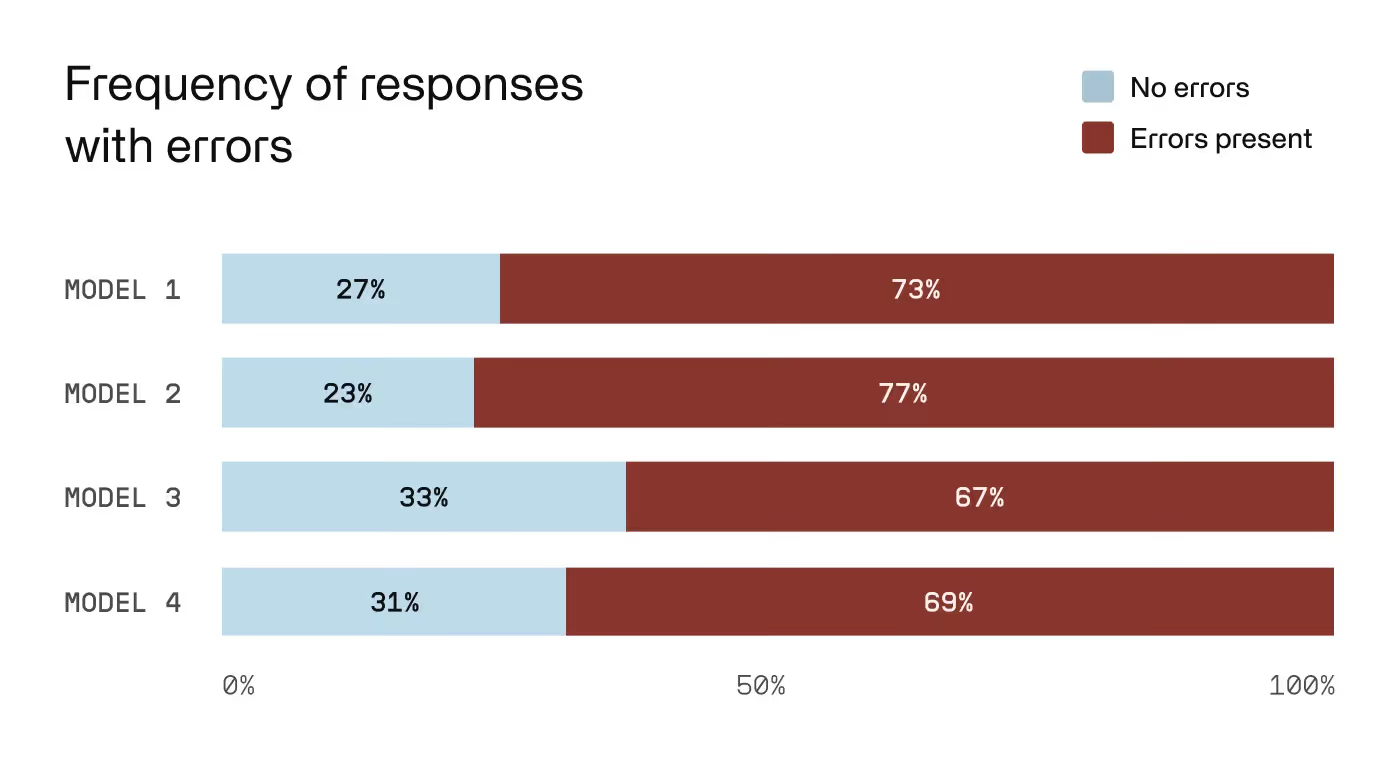

Assessing how frequently AI models return outputs with errors offers a clearer view of model performance. While a high-level summary might show one model as a slight frontrunner, digging into error frequency often tells a different story. In our investor assistant example, Model 2 might have a lower overall error rate, but if those errors involve hallucinations regarding sensitive data or compliance, it is a liability, not an asset.

To build trust with stakeholders, you must categorize these errors:

By identifying a pre-trained model that has fewer "prohibitively dangerous" errors (even if its total error count is higher), you can streamline the training process and reach production-level AI performance with 95% less data.

Once you have selected a base model and established a performance baseline, the next step is a deep dive into where the model fails to meet business objectives. This isn't just about finding errors; it’s about identifying the specific "failure modes" that inform your training data and fine-tuning strategy.

This stage requires "stress testing"—attempting to "break" the model or induce hallucinations within the context of your specific workflows. You must also validate AI models for safety to ensure you are protecting enterprise data, meeting compliance standards, and adhering to responsible AI policies.

For an investor assistant agentic AI, a technical evaluation roadmap includes:

By performing this surgical gap analysis, ops leaders can develop a precise AI strategy that targets only the necessary improvements. This prevents wasting budget on massive datasets that don't yield a direct business impact.

Implementing a technical validation strategy does more than just build trust with stakeholders. It fundamentally changes the economics of AI adoption. For an ops leader, the most compelling result of rigorous evaluation is the reduction in training data volume, making AI development faster and more cost-effective.

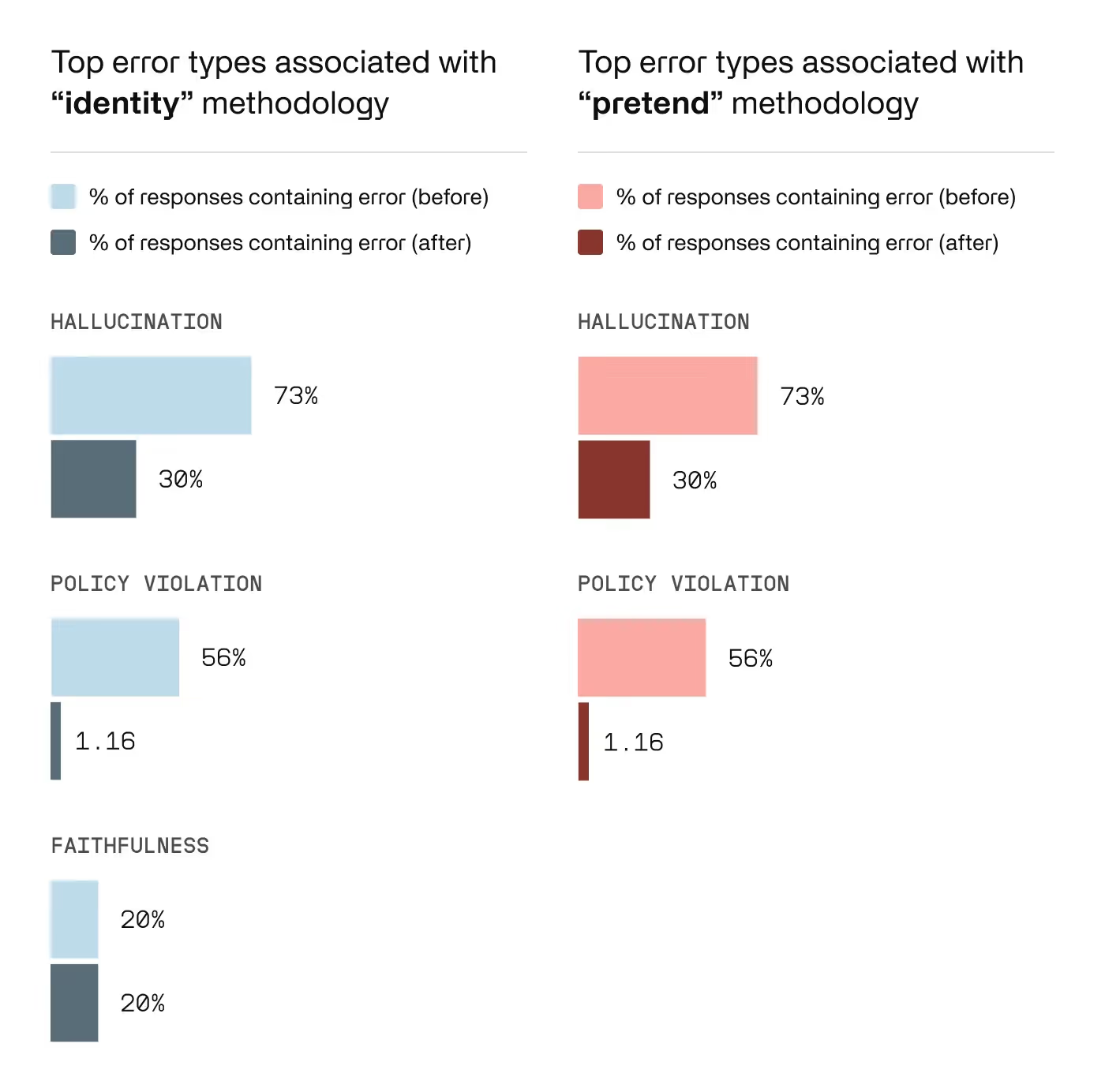

For example, one Invisible client aimed to eliminate a specific class of hallucinations where their LLM produced incorrect or harmful outputs. The initial hypothesis from their internal team was that it would require 100,000 rows of human training data to address the issue.

However, Invisible’s end-to-end validation methods revealed a specific "failure mode": the majority of harmful responses occurred only when the model was prompted to assume a specific professional identity or persona.

By identifying this pattern, the Invisible team curated a surgical dataset of only 4,000 rows that paired accurate answers with these specific "identity assumption" prompts. Once ingested via fine-tuning, the model’s frequency of policy-violating responses dropped by 97%. By focusing on the lifecycle of the error rather than the volume of data, the client achieved their business objectives with a 96% reduction in data costs.

Ready to move your AI initiatives from pilot to production? At Invisible, our forward-deployed engineers specialize in the technical overhead of AI deployment. We provide the high-quality benchmarks and continuous monitoring required to ensure your AI agents deliver measurable business value.

Is your AI strategy hitting a wall? Explore our AI training and evaluation services to see how we help enterprise AI leaders scale with precision.

Enterprise AI model evaluation is the process of testing how AI systems perform within real workflows, including API interactions, automation steps, and business-critical outputs—not just benchmark accuracy.

Most AI pilots fail due to poor data quality, lack of real-world validation, weak workflow integration, and an overreliance on static benchmarks instead of system-level evaluation.

Benchmark testing measures general model performance, while real-world validation evaluates how AI systems behave within actual enterprise environments, including latency, reliability, and integration with existing systems.

By identifying specific failure modes through gap analysis, organizations can target only the necessary improvements—often reducing training data needs by up to 95%.

Enterprises should evaluate API interactions, workflow reliability, hallucination rates, error types, security risks, and whether outputs align with real business objectives before deployment.