In 2026, the baseline for enterprise AI has shifted from simple chatbot interfaces to autonomous agentic AI. While foundation models like GPT-4 and Llama provide the "brain," they lack the domain-specific precision required to execute complex workflows.

Recent data from Gartner suggests that 30% of GenAI projects will be abandoned by the end of 2026, largely due to poor data quality and lack of specialized optimization. To bridge this gap, enterprises are moving beyond prompt engineering and into advanced LLM fine-tuning to create reliable, goal-oriented agents.

In an agentic AI context, a fine-tuned model does more than talk—it reasons. While a base model can summarize a meeting, a model fine-tuned on enterprise data can:

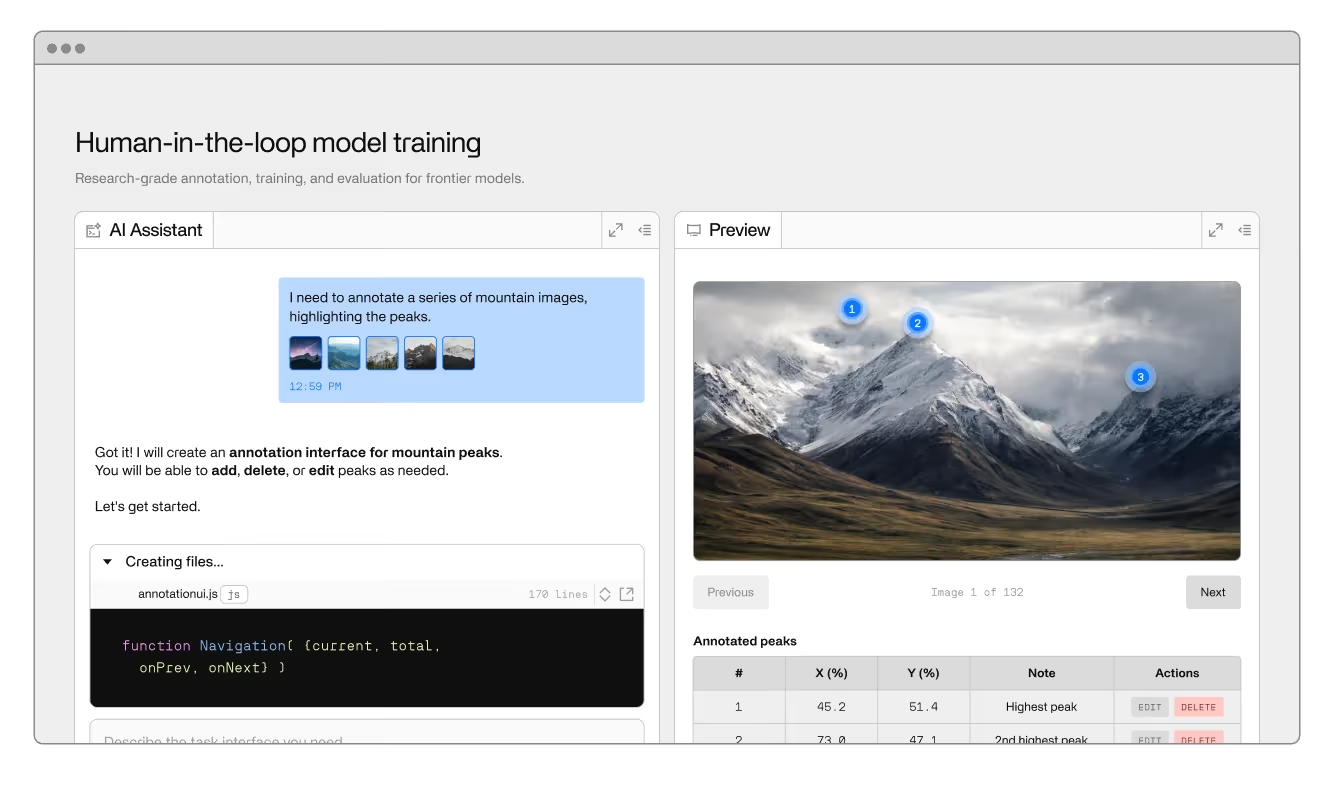

At Invisible, our forward-deployed engineers use the Axon platform to orchestrate these agents. By focusing on high-quality human-in-the-loop training data, we transform large language models into specialized operational infrastructure.

"Should we use RAG or fine-tuning?" remains the most-searched question in artificial intelligence. In 2026, the answer is rarely "either/or"—it’s about how they complement each other.

Strategic Note: Most successful AI solutions today use a hybrid approach. RAG provides the new data, while fine-tuning ensures the model knows exactly how to process it.
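As a rough illustration of the hybrid pattern, the sketch below retrieves the most relevant document and places it in the prompt that a fine-tuned model would then process. Everything here is an assumption for illustration: the `DOCUMENTS` store, the keyword-overlap retriever (a real system would typically use embeddings and a vector index), and the `stand_in_model` function, which is only a placeholder for a call to your fine-tuned model.

```python
import re

# Hypothetical knowledge base; in practice this would be a document store.
DOCUMENTS = {
    "refund-policy": "Refunds for duplicate charges are issued within 5 business days.",
    "password-policy": "Password resets require email verification.",
}

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=1):
    """Rank documents by token overlap with the query (toy retriever)."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(query_tokens & tokenize(item[1])),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:top_k]]

def build_prompt(query, documents, top_k=1):
    """RAG step: inject retrieved text so the model sees current data."""
    doc_ids = retrieve(query, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return f"Context:\n{context}\n\nTask: {query}"

def stand_in_model(prompt):
    """Placeholder for a fine-tuned model call; it only reports prompt size."""
    return f"(model would process {len(prompt)} characters of prompt)"

query = "How long do refunds for duplicate charges take?"
prompt = build_prompt(query, DOCUMENTS)
print(prompt)
print(stand_in_model(prompt))
```

The split mirrors the note above: `build_prompt` supplies the fresh data, and whatever replaces `stand_in_model` carries the learned behavior for handling it.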

Updating a model for real-world enterprise use requires a rigorous fine-tuning process.

The success of fine-tuning AI models depends entirely on how well your dataset is curated. You must move past "raw data" and create "instruction-ready" data: examples that pair a clear instruction and input with the output you want the model to produce.
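One way to picture the step from raw to instruction-ready data is the minimal sketch below. It assumes hypothetical support-ticket rows with `subject`, `body`, and `resolution` fields, drops rows that lack a usable input or target, removes exact duplicates, and writes instruction/input/output records as JSON Lines. The field names and the instruction text are illustrative, not a required schema.

```python
import json

# Hypothetical raw export; field names are assumptions for illustration.
RAW_TICKETS = [
    {"subject": "Refund request",
     "body": "Customer was charged twice for order 1001.",
     "resolution": "Refund the duplicate charge and confirm by email."},
    {"subject": "Refund request",  # exact duplicate of the row above
     "body": "Customer was charged twice for order 1001.",
     "resolution": "Refund the duplicate charge and confirm by email."},
    {"subject": "Login issue",  # no resolution, so no target to learn from
     "body": "User cannot reset password.",
     "resolution": ""},
]

INSTRUCTION = "Read the support ticket and state the next action an agent should take."

def to_instruction_records(raw_rows):
    """Convert raw rows into deduplicated instruction/input/output records."""
    seen = set()
    records = []
    for row in raw_rows:
        body = row.get("body", "").strip()
        resolution = row.get("resolution", "").strip()
        if not body or not resolution:
            continue  # skip rows with no usable input or no target output
        key = (body, resolution)
        if key in seen:
            continue  # skip exact duplicates
        seen.add(key)
        records.append({
            "instruction": INSTRUCTION,
            "input": f"Subject: {row.get('subject', '').strip()}\n{body}",
            "output": resolution,
        })
    return records

def to_jsonl(records):
    """Serialize records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)

records = to_instruction_records(RAW_TICKETS)
print(to_jsonl(records))
```

In a real pipeline, human review of the `output` field would sit between these two functions; the code only handles the mechanical filtering.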

Training large models from scratch is rarely feasible. LoRA (low-rank adaptation) and other parameter-efficient fine-tuning techniques freeze the base model's weights and train only a small set of added low-rank parameters, so you can adapt a model with minimal computational resources. This makes optimization faster and allows for rapid iteration cycles.
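To make the savings concrete, here is a small pure-Python sketch of the LoRA idea for a single weight matrix: the frozen weight `W` is left untouched, and the layer output adds a scaled low-rank term `B @ A @ x`. The dimensions, `alpha` value, and helper names are illustrative assumptions; a production setup would use a deep learning framework rather than Python lists.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full fine-tuning vs. a rank-`rank` adapter."""
    full = d_out * d_in
    adapter = rank * (d_in + d_out)  # A is rank x d_in, B is d_out x rank
    return full, adapter

def lora_forward(x, W, A, B, alpha):
    """Compute W x + (alpha / rank) * B (A x); W itself is never modified."""
    rank = len(A)
    scale = alpha / rank
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + scale * d for b, d in zip(base, delta)]

random.seed(0)
d_in, d_out, rank = 4, 3, 2
W = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]   # frozen
A = [[random.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]  # trainable
B = [[0.0] * rank for _ in range(d_out)]  # trainable, zero-initialized
x = [1.0, 2.0, 3.0, 4.0]

# With B at zero, the adapted layer starts out identical to the base layer.
print(lora_forward(x, W, A, B, alpha=16) == matvec(W, x))

full, adapter = lora_param_counts(4096, 4096, 8)
print(full, adapter, adapter / full)
```

For a 4096 by 4096 matrix at rank 8, the adapter trains 65,536 parameters against 16,777,216 for the full matrix, or about 0.4%.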

Enterprises cannot risk leaking sensitive data into public foundation models. Fine-tuning open-source models (like Llama) within your own VPC gives you the benefits of generative AI without the privacy trade-offs.

The ultimate goal of fine-tuning LLMs is to improve metrics that matter to the business: latency, accuracy, and automation depth.
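A minimal evaluation harness for two of these metrics might look like the sketch below. It assumes the model is any Python callable that maps a prompt to a string, scores accuracy by case-insensitive exact match, and times each call. The `stub_model` and the test cases are hypothetical stand-ins, and automation depth is left out because how to measure it depends on the workflow.

```python
import statistics
import time

def evaluate(model, cases):
    """Return exact-match accuracy and latency statistics for a model callable."""
    if not cases:
        raise ValueError("cases must not be empty")
    latencies = []
    correct = 0
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = model(prompt)
        latencies.append(time.perf_counter() - start)
        # Case-insensitive exact match; real tasks may need a looser metric.
        correct += int(answer.strip().lower() == expected.strip().lower())
    return {
        "accuracy": correct / len(cases),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
    }

def stub_model(prompt):
    """Hypothetical stand-in for a fine-tuned model endpoint."""
    canned = {
        "Classify: invoice overdue": "escalate",
        "Classify: password reset": "Auto-Resolve",
    }
    return canned.get(prompt, "unknown")

CASES = [
    ("Classify: invoice overdue", "escalate"),
    ("Classify: password reset", "auto-resolve"),
    ("Classify: contract renewal", "escalate"),
    ("Classify: address change", "auto-resolve"),
]

report = evaluate(stub_model, CASES)
print(report)
```

Running the same harness against the base model and the fine-tuned model on a held-out set gives a like-for-like comparison before and after tuning.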

Building agentic AI that actually works requires a blend of machine learning expertise and process engineering. Fine-tune your model with Invisible.