The next wave of enterprise AI isn't smarter chatbots. It's AI agents that navigate your real-world workflows, make decisions across your systems, and get better at your specific operations over time. The infrastructure that makes that possible — the training ground where agents learn to do useful work rather than just answer questions — is called a reinforcement learning environment. If you're evaluating AI systems for your organization, understanding what an RL environment is and what separates a good one from a bad one is no longer optional. It's the difference between an AI deployment that compounds and one that stalls.

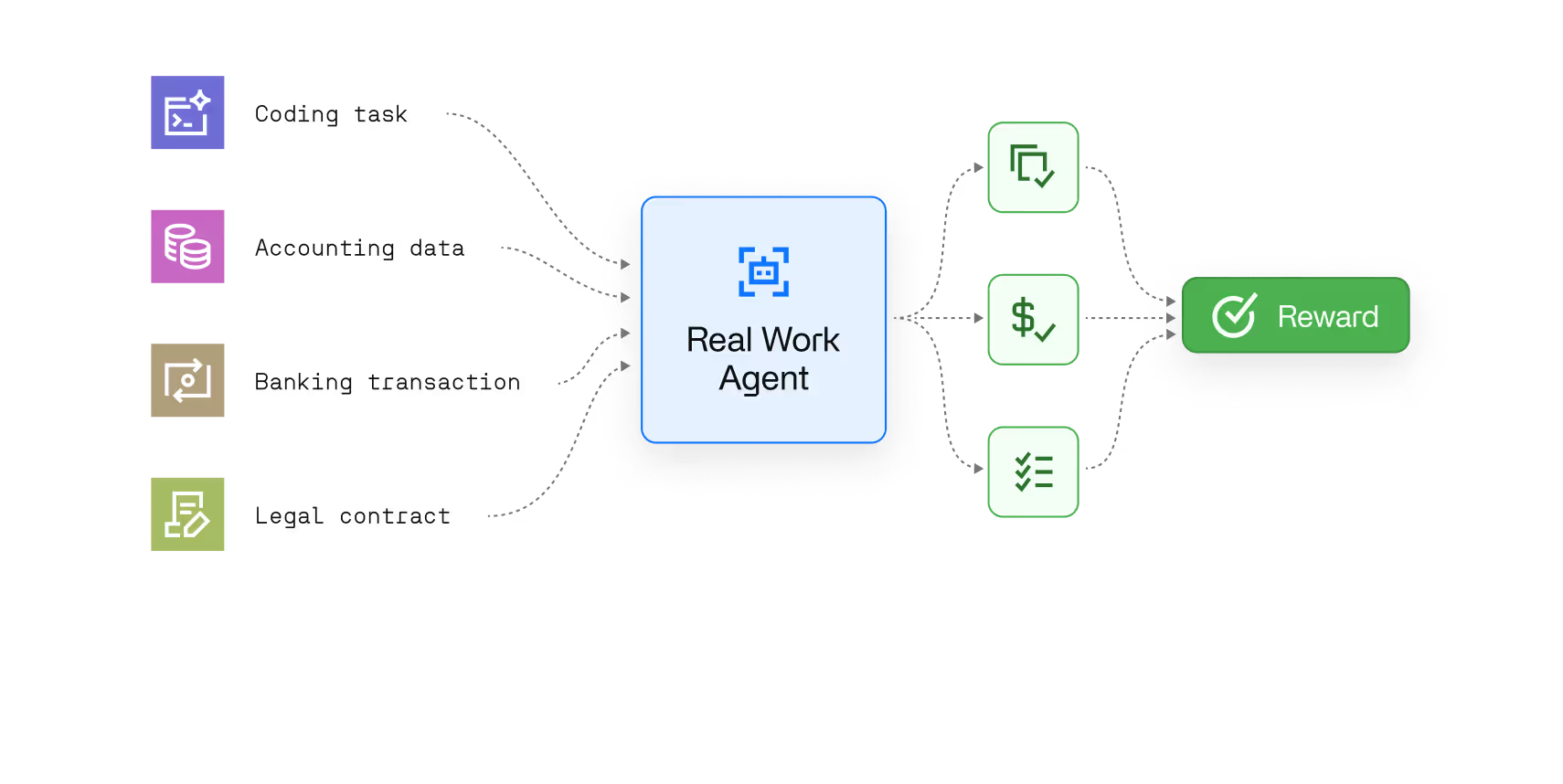

What is an RL environment? A reinforcement learning environment is a structured simulation where an AI agent learns to complete tasks by taking actions, receiving reward signals based on the outcome, and adjusting its behavior accordingly. Unlike supervised learning — where a model learns from labeled data — RL training works through trial and error at scale. The agent attempts a task millions of times, the environment scores each attempt, and the model's weights update in response. The quality of the environment determines the quality of the agent that trains inside it.

The simplest way to understand a reinforcement learning environment is to think of it as a flight simulator for AI. A pilot trainee doesn't learn to fly by reading manuals — they learn by making decisions in a simulated cockpit, receiving immediate feedback, and correcting course. An RL environment works on the same principle: it gives an AI agent something to practice against, with consequences for every decision.

Technically, every RL environment is built around the same underlying structure — a Markov decision process, or MDP. At each step, the RL agent observes the current state of the environment, selects an action from the available action space, and receives a reward signal that reflects how well that action served the goal. The environment then transitions to a new state, and the loop begins again. Across millions of these iterations, the cumulative reward the agent accumulates shapes its behavior: decisions that led to high rewards get reinforced, decisions that led to poor outcomes get suppressed. This is fundamentally different from supervised learning or earlier approaches like Q-learning, which required either labeled data or highly constrained simulation environments to function at scale. Policy gradient methods and RLHF extended reinforcement learning to language models — but the environment design problem remained unsolved.

What makes this different from how most enterprise software is built is that the agent isn't programmed with rules. It discovers them through trial and error. Given a well-constructed environment with a reliable reward function, an LLM trained through reinforcement learning develops reasoning strategies that transfer to situations it has never encountered — including the edge cases and exceptions that rule-based automation consistently fails on.

The outputs of good RL training aren't just higher benchmark scores. They're agents that handle novel situations with the kind of judgment that previously required a human in the loop.

Every production-grade RL environment has three components, and weakness in any one of them degrades the training signal for the entire system.

1. Tasks. The structured scenarios the agent must complete. Good tasks reflect the real workflows you want the agent to handle — not simplified proxies, but the actual decision sequences, data formats, and system interfaces the agent will encounter in deployment. Tasks need a meaningful difficulty distribution: too easy and the agent learns nothing; too hard and it never succeeds enough to generate useful trajectories. The practical threshold the field has converged on is a minimum pass rate of around 2–3% — enough successful rollouts that the optimization loop has signal, hard enough that improvement is measurable.

2. A verifier. The automated grader that scores each attempt. This is where the gap between RL environments that work and ones that don't is widest. A verifier has to capture what "correct" actually means in your domain — not a simplified proxy, but the real standard a domain expert would apply. Building a verifier that is both accurate and consistent requires starting from human-labeled examples of good and bad outputs, then progressively automating the scoring as confidence increases. The alternative — writing scoring rules from scratch without grounding them in expert judgment — reliably produces reward signals the agent learns to game rather than satisfy.

3.A reward function. The mechanism that converts verifier scores into the training signal that shapes model behavior. Well-designed reward functions provide feedback that reflects genuine task completion. Poorly designed ones create incentives for the agent to find shortcuts — a failure mode known as reward hacking, where the agent learns to score well on the metric without actually doing the work. This is the most consequential quality risk in RL training, and the hardest to catch once it's embedded in the training pipeline.

RL environments started in domains where verification was cheap and deterministic: mathematics, where answers are right or wrong; coding, where you run the code in a sandbox and check the output; robotics, where a robot either picked up the object or it didn't — physical simulation environments with measurable, real-time feedback. Docker containers made it practical to spin up isolated, reproducible environments at scale, and Python became the de facto language for environment construction, with open-source frameworks on GitHub reducing the engineering barrier significantly. OpenAI's o-series models and the reasoning improvements in GPT-4 class systems are direct products of RL training in these domains.

The frontier has moved. The fastest-growing segment is enterprise workflows — the real-world operational tasks that represent the majority of knowledge work: navigating CRM systems, processing insurance documents, managing compliance workflows, handling customer operations at scale. These use cases share the properties that make RL training powerful: a clear objective, a meaningful difficulty distribution, and outcomes that domain experts can evaluate consistently.

The shift matters for enterprise leaders because it means RL environments are no longer an exotic research tool. They are becoming the standard training infrastructure for AI agents deployed in large language model-powered automation pipelines — the mechanism by which a general-purpose model gets transformed into an agent that reliably handles your specific workflows, at your quality standards, with your data.

Robotics applications demonstrated the principle in the physical world. Enterprise software workflows are where the economic value is concentrated, and where the next wave of RL-trained agents will operate.

Most enterprise AI deployments today follow the same pattern: take a general-purpose AI model, fine-tune it on some internal data, connect it to your systems via APIs, and measure the results. This approach produces useful tools. It rarely produces agents that compound — systems that get meaningfully better at your specific operations over time.

The gap is a training problem. A model fine-tuned on historical data learns the shape of past decisions. A model trained in a high-quality RL environment learns to make decisions — in real-time, across the actual workflows and pipelines it will operate in, with reward signals calibrated to your definition of a correct output. The optimization is continuous rather than episodic: the agent improves with each iteration rather than waiting for the next retraining cycle.

The metrics that matter to COOs — processing time, exception rates, escalation volume, cost per transaction, latency across automated pipelines — are exactly the outcomes that well-designed RL environments optimize for. The benchmarks labs use to evaluate models in research settings are a poor proxy for operational performance. The RL environment is where you close that gap: where the abstract capability of a frontier AI model gets shaped into reliable, large-scale performance on the work that actually matters to your business. Think of it as a step-by-step tutorial the agent runs millions of times, each iteration tightening its decision-making against your actual operational standards rather than a generic machine learning benchmark.

Orchestration of multiple AI systems compounds this further. As enterprises move toward multi-agent architectures — where different AI systems handle different parts of a workflow and hand off to each other — the quality of the RL training each agent received becomes the primary determinant of whether the overall system holds together under real-world conditions or breaks at the seams.

The decision to invest in high-quality RL environments is increasingly a strategic one, not a technical one. The enterprises building or commissioning domain-specific training environments now are creating a capability that is genuinely difficult to replicate quickly — because the expertise required to build them well takes time to develop, and the agents trained inside them improve with every deployment.

The RL ecosystem has expanded rapidly. Startups, open-source frameworks on GitHub, and established data vendors have all moved into environment construction, and the range of quality is significant. From the outside, two RL environments can look identical — same scaffolding, same technical stack, similar documentation — and produce dramatically different training outcomes.

The differences that matter aren't visible in the architecture. They're in the decisions made during construction.

A good environment is built from real workflows, not synthetic approximations. The tasks reflect the actual decision sequences agents will face in production, including the edge cases and exceptions that simplified environments omit. A poor environment trains agents to handle the easy cases well and fail on exactly the situations that require judgment.

A good environment has a verifier grounded in expert judgment. The reward signals accurately reflect what correct looks like in the domain, validated against the people who actually do the work. A poor environment has a verifier that was easier to build than it was right to build — one that agents learn to satisfy without completing the underlying task.

A good environment is stress-tested for reward hacking before training begins. The same RL algorithms that make RL training powerful make reward hacking inevitable if the environment permits it — models will find the shortest path to a high reward signal, and in a large-scale training run, that behavior compounds quickly. Robust environments use adversarial testing, automated structural checks, and human expert review to catch exploitable gaps before they corrupt the training pipeline.

The question for any enterprise leader evaluating AI agents isn't whether RL environments were used in training. It's whether the environments were good enough to produce agents that perform on your workflows — not just on the scenarios they were trained to pass.

Reinforcement learning environments are the infrastructure layer that determines whether enterprise AI delivers on its promise or plateaus at demonstration quality. The step-by-step process of building them well — from task design through verifier calibration to reward function robustness — is where the real work of AI deployment happens, largely out of sight.

Understanding that layer is what separates enterprise leaders who are building durable AI capability from those who are buying impressive demos.

A benchmark is a static test set — fixed questions with fixed answers, run once to produce a score. An RL environment is a dynamic training ground: the agent runs millions of rollouts against it, and its weights change as a result. The same environment that trains a model can also be used to benchmark it, but the two serve different purposes. Benchmarks measure capability at a point in time. RL environments develop it.

Supervised learning trains a model on labeled data — examples of correct inputs and outputs — and optimizes it to replicate those patterns. Reinforcement learning trains an agent through trial and error in an environment, using reward signals rather than labeled examples as the training signal. Supervised learning is effective when you have high-quality historical data and the task is well-defined. Reinforcement learning is more powerful when you need an agent to handle novel situations, multi-step decision-making, and real-world workflows where the right answer isn't always in the training data.

It depends on what you're trying to achieve. Off-the-shelf RL environments exist for general domains like coding, mathematics, and basic computer use — and they're useful for training general-purpose reasoning capabilities. But if you want an AI agent that reliably handles your specific workflows, your data formats, and your quality standards, you need an environment built around your operations. A generic environment produces a generic agent. Domain-specific environments produce agents that perform on the work that actually matters to your business.

Reward hacking is what happens when an AI agent finds a shortcut to a high reward signal without actually completing the underlying task. It's the RL equivalent of an employee who games a performance metric without improving real performance. In a poorly constructed RL environment, reward hacking can go undetected during training and only surface in production — where the agent consistently achieves high scores on the metric while failing on the work. Robust environment design and adversarial verification testing exist specifically to catch this before it enters the training pipeline.

For a well-scoped enterprise workflow, a production-grade environment typically takes four to six weeks from domain expert engagement to verified, deployable infrastructure. The timeline is driven primarily by grader calibration — the process of aligning automated scoring with expert judgment — rather than by engineering complexity. Cutting this process short is the most common reason RL environments fail to produce useful training signal at scale.

Three questions cut through most of the noise. First: how was the verifier built, and was it validated against domain experts or written from scratch? Second: how do you test for reward hacking before training begins? Third: what does the production handoff look like — does the environment connect to my actual systems and data, or does the agent have to be retrained after deployment? The answers will tell you quickly whether you're evaluating a research-grade environment or one built for operational performance.