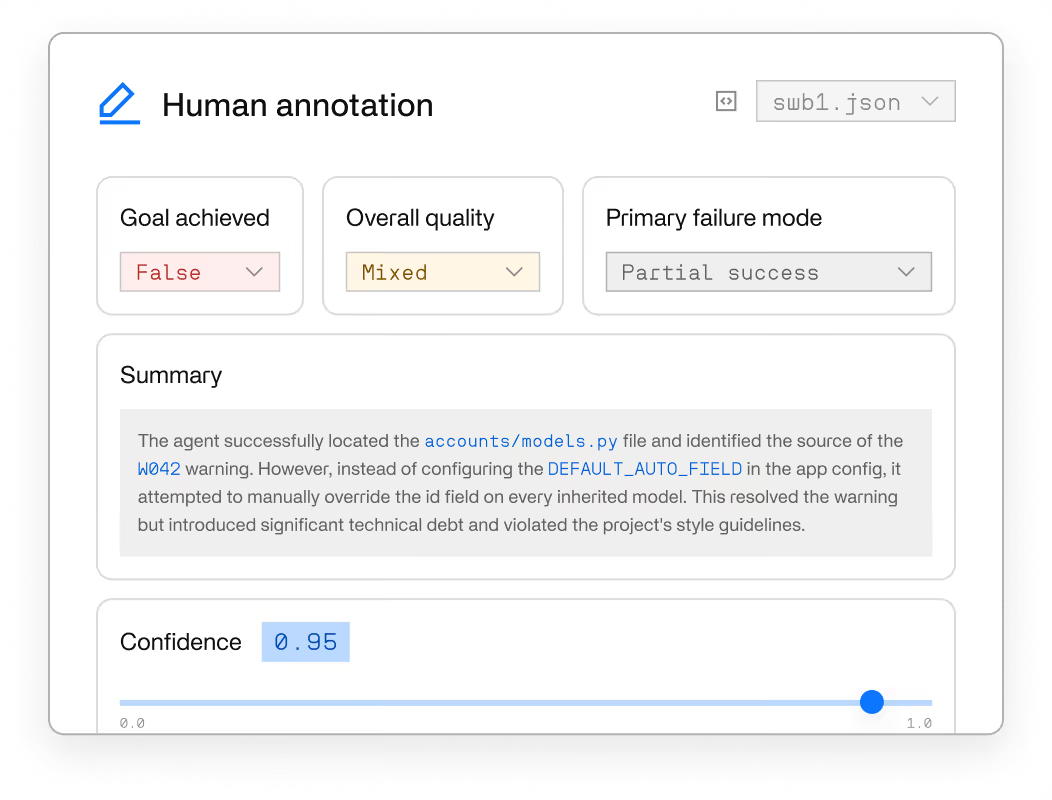

Reward design is too consequential to automate. Our experts define task logic, design reward rubrics, and annotate trajectories.

Train agents around your goals through realistic tasks that reflect real-world tools, data, uncertainty, and decision-making.

Flexible integration and secure deployment options designed to fit your stack.

Train, test, and trust agentic AI on real work.

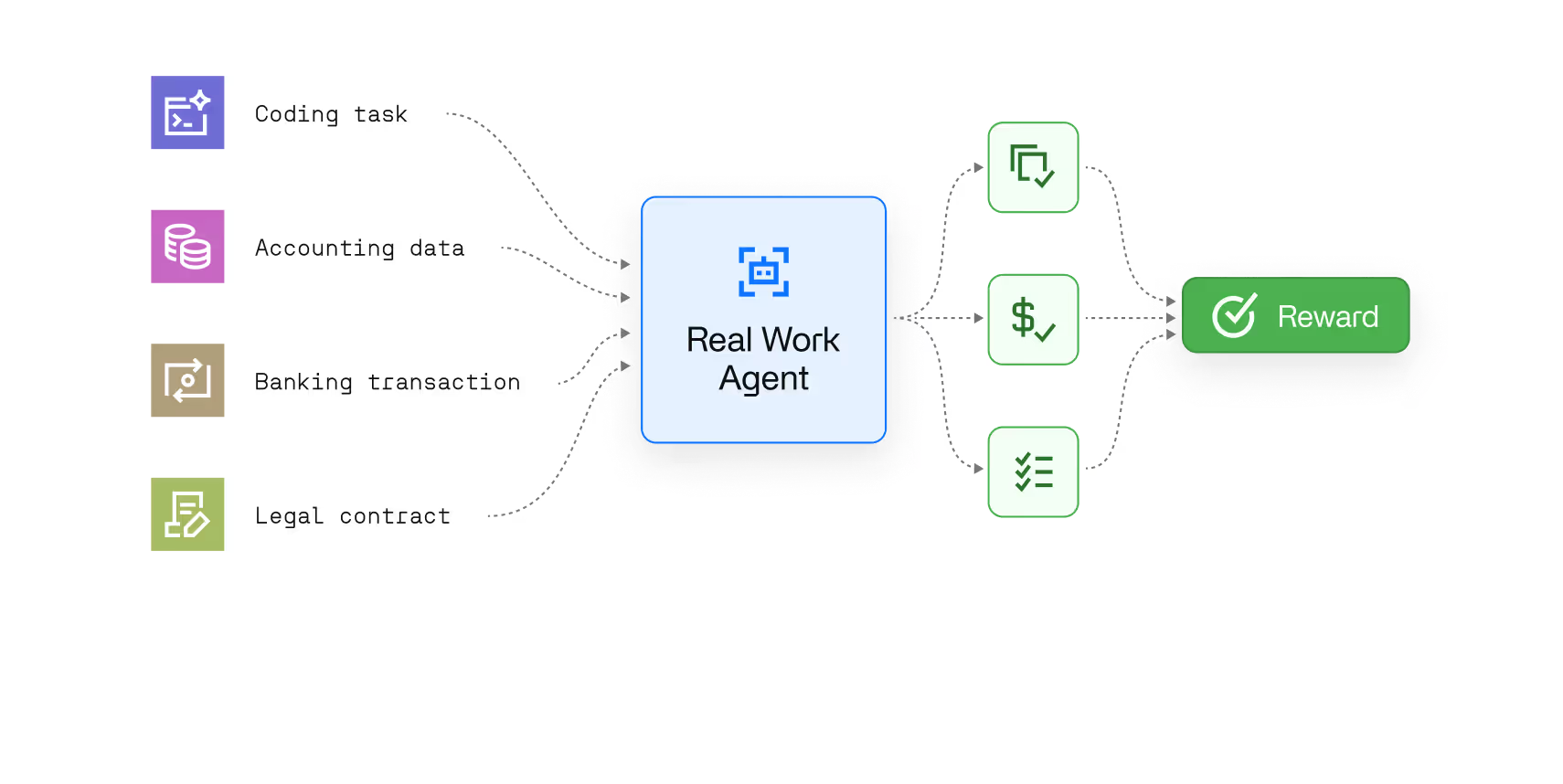

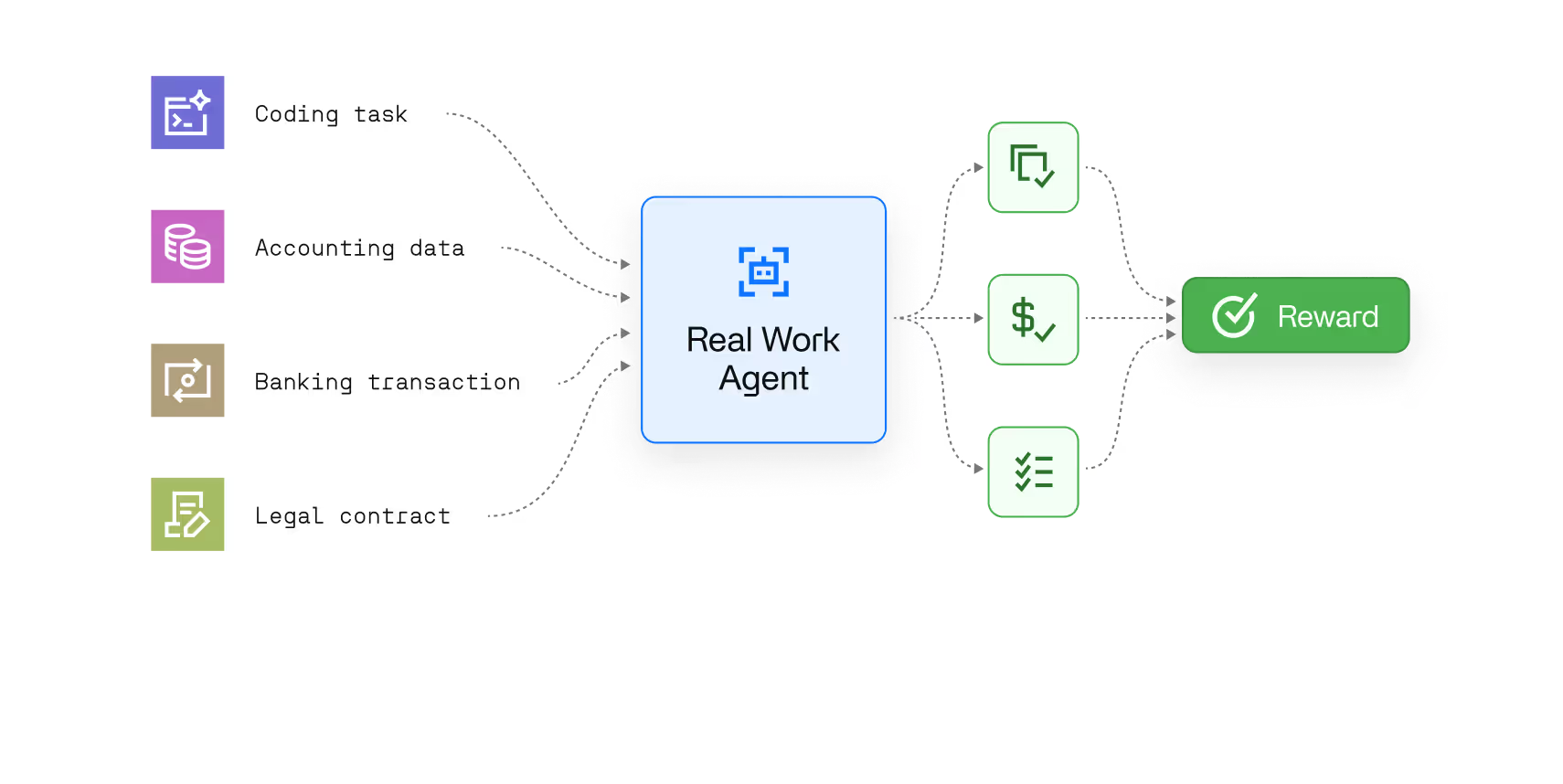

Tasks are drawn from work that creates real value like coding, accounting, banking, legal and compliance. Agents learn to complete the work, not just pass the test.

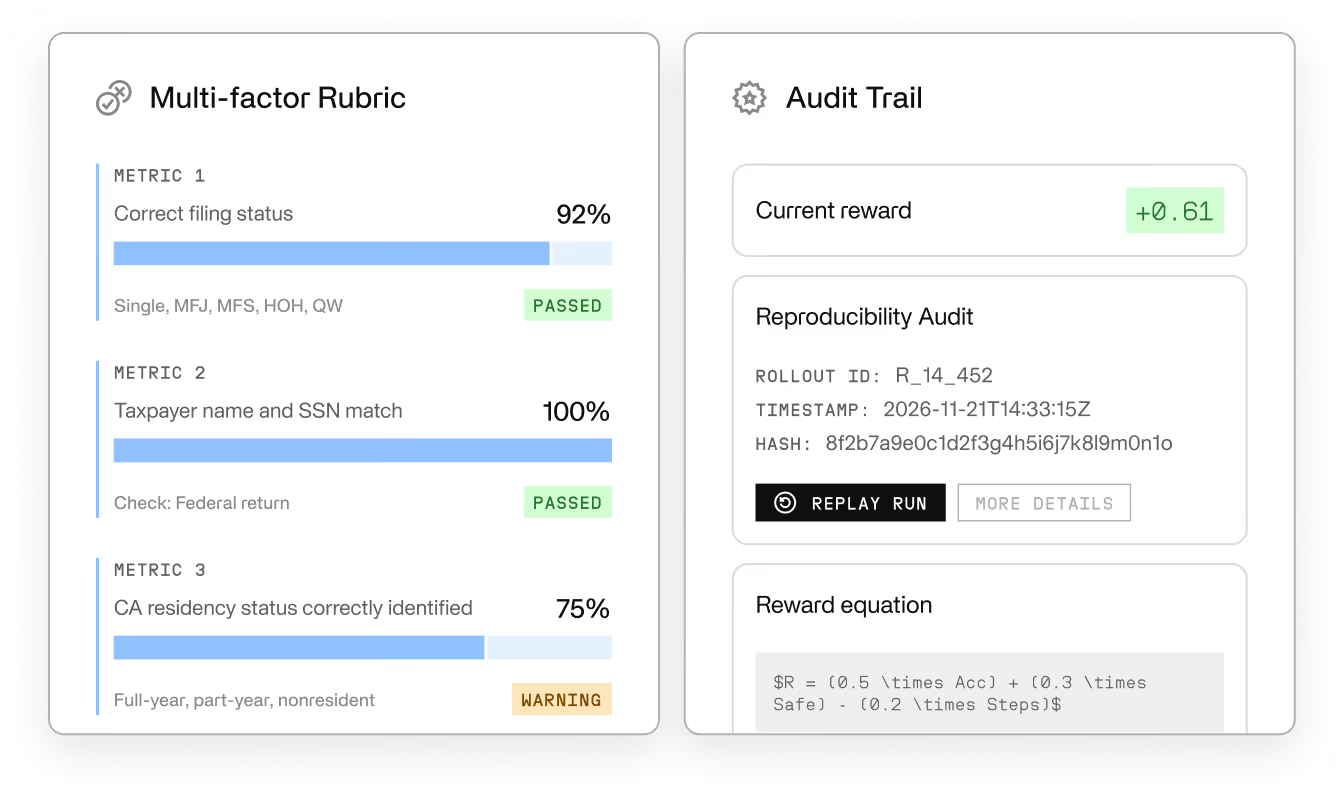

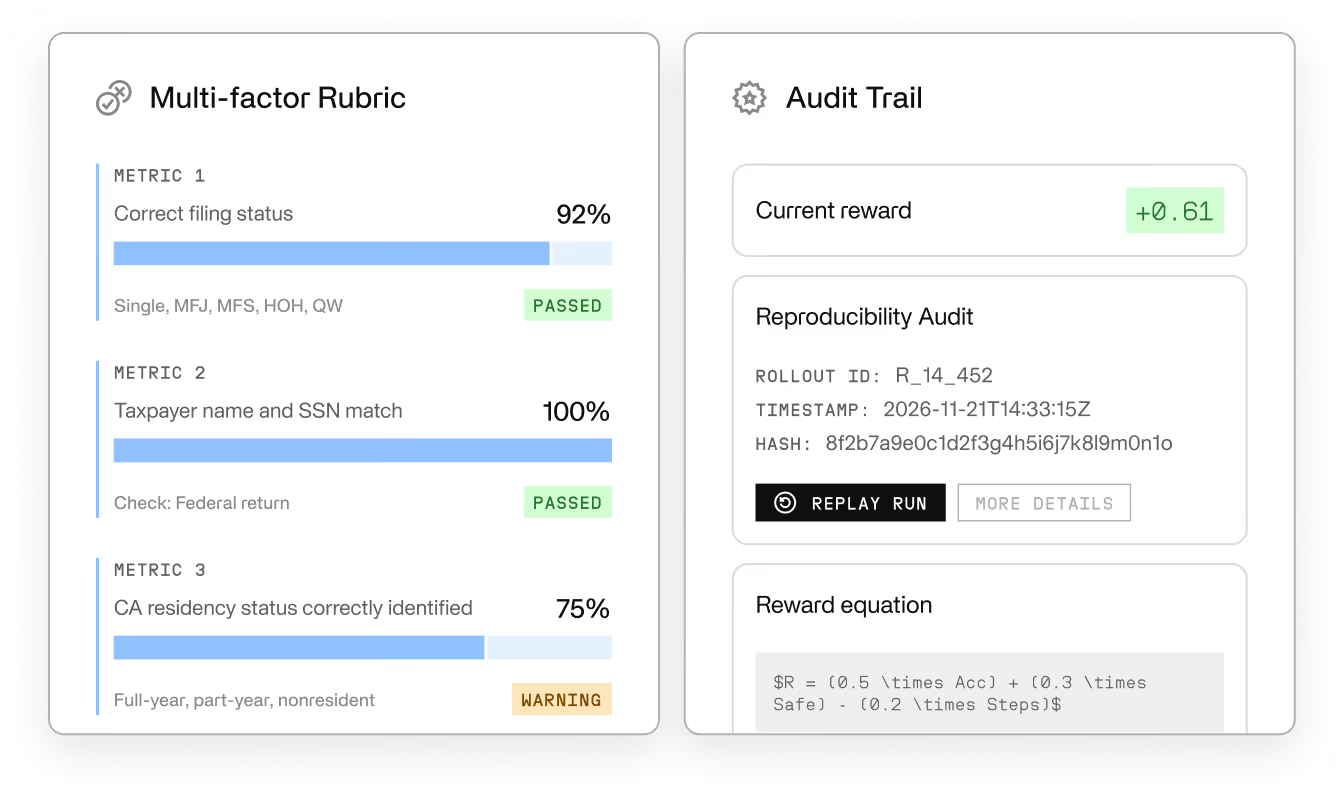

Measure success with built-in rewards, rubrics, and automated checks. Every outcome is auditable, reproducible, and verifiable.

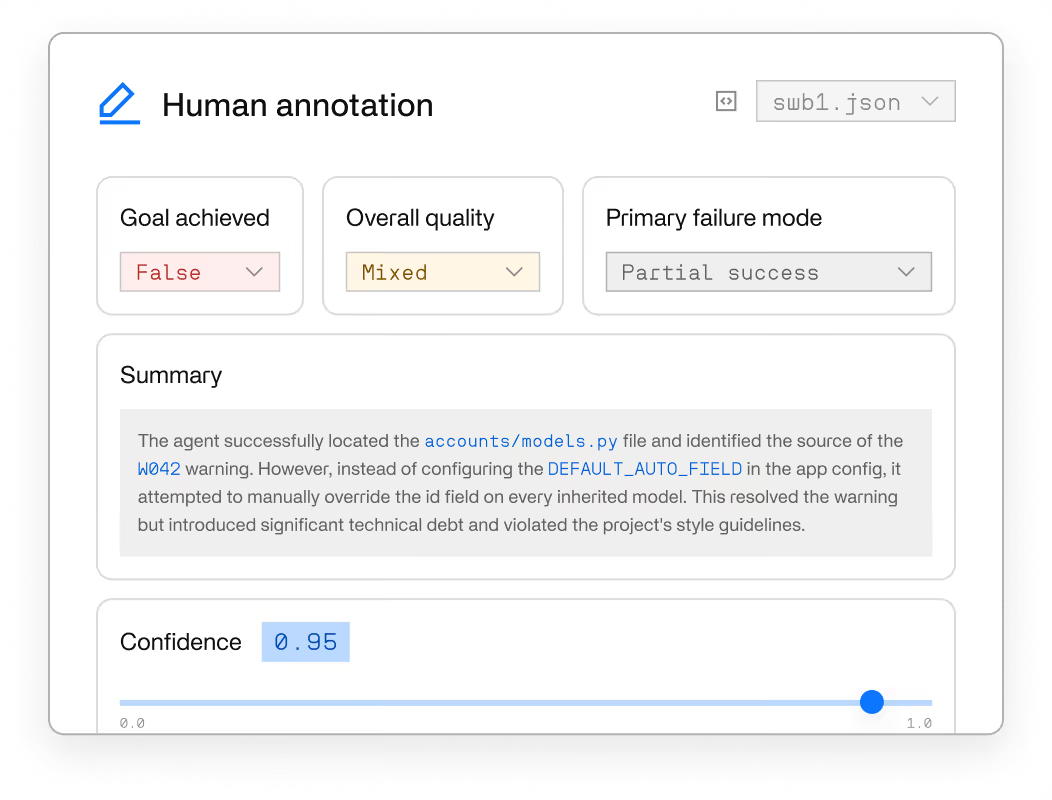

The most complex tasks need a reference. Human annotations define what good looks like and teach models the moves reward signals alone can’t.

Every run is logged and replayable. Debug failures, compare model versions, and show stakeholders exactly what the agent did and why.

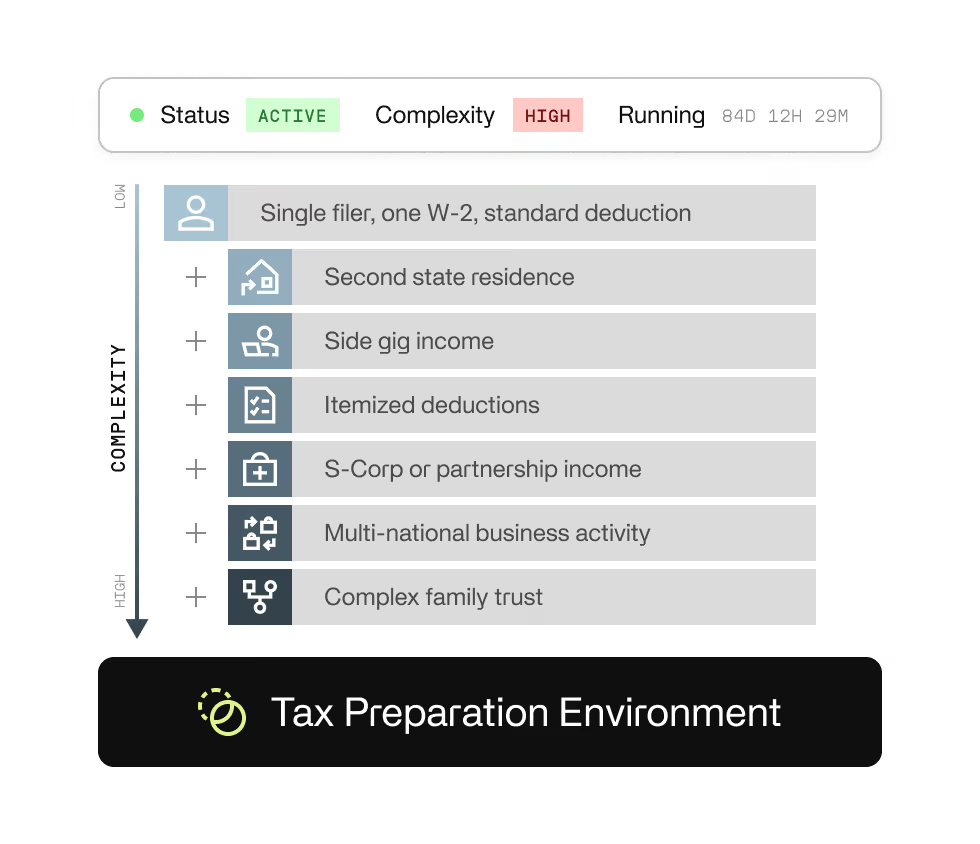

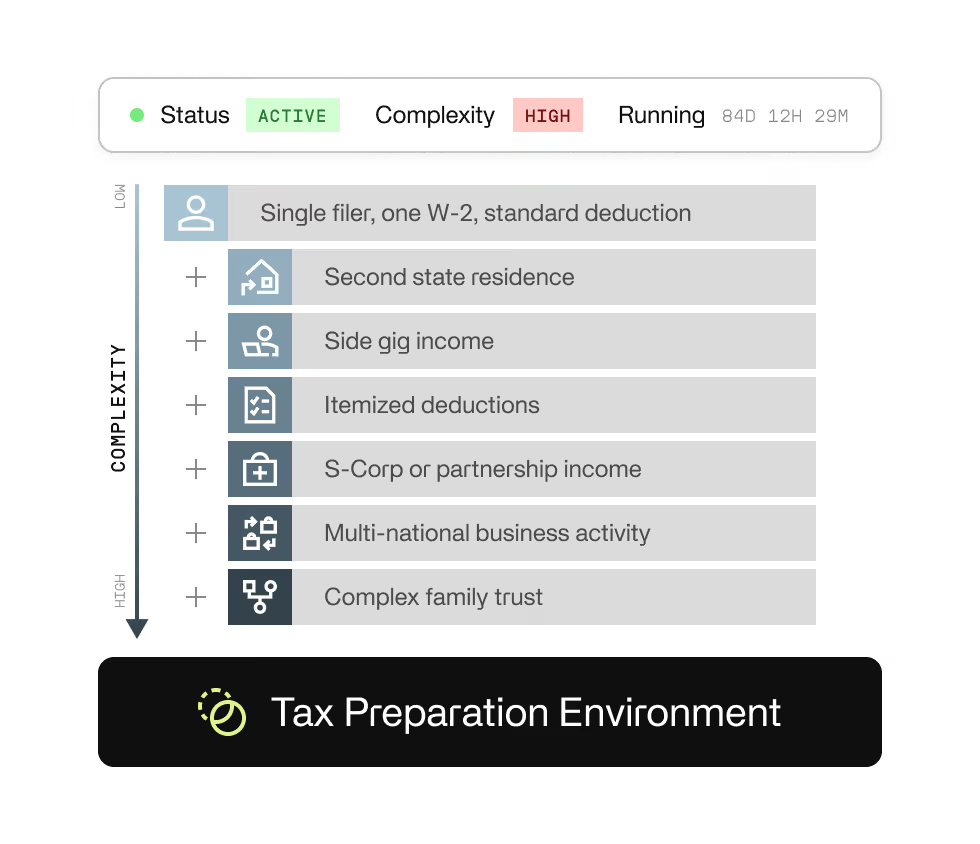

Start with simple tasks, layer in constraints, and increase complexity over time within the same environment. No rebuild required as capabilities improve.

Tasks are drawn from work that creates real value like coding, accounting, banking, legal and compliance. Agents learn to complete the work, not just pass the test.

Measure success with built-in rewards, rubrics, and automated checks. Every outcome is auditable, reproducible, and verifiable.

The most complex tasks need a reference. Human annotations define what good looks like and teach models the moves reward signals alone can’t.

Every run is logged and replayable. Debug failures, compare model versions, and show stakeholders exactly what the agent did and why.

Start with simple tasks, layer in constraints, and increase complexity over time within the same environment. No rebuild required as capabilities improve.