The most common bottleneck in a reinforcement learning pipeline occurs before training begins. The three primary failure points are: poorly specified reward functions that cause the RL agent to optimize for the wrong objective, simulation-to-real mismatch in training data that produces policies which fail outside the training environment, and off-policy data drift where stale trajectories degrade training quality over time. Most teams diagnose these problems as algorithm failures. They are pipeline failures.

You've been here. The RL training run finally kicks off after weeks of environment setup and data prep. The team is heads-down. Progress feels imminent. Then, somewhere around week three, the numbers plateau. The model isn't converging. The post-mortem points at the algorithm. Maybe the reward function needs redesigning, maybe a different policy optimization approach is needed. Another iteration begins.

But the algorithm wasn't the problem.

Most reinforcement learning pipeline failures are decided before a single gradient update runs. The conditions that determine whether RL training succeeds or collapses are set during the stages most teams treat as overhead: data collection, environment design, reward function specification, baseline validation. By the time training begins, the outcome is often already written. The team just doesn't know it yet.

For TPMs managing RL projects, this is the visibility gap that costs the most. You're accountable for timelines and budgets that are being shaped by decisions upstream of training — decisions that are rarely surfaced clearly, rarely instrumented properly, and rarely caught until they've already burned through GPU compute and calendar.

Here's what's actually going wrong, and where to look.

Supervised learning has a relatively forgiving pipeline. Bad training data produces bad predictions, and bad predictions are visible quickly. You can debug, fix, and retrain in a contained loop.

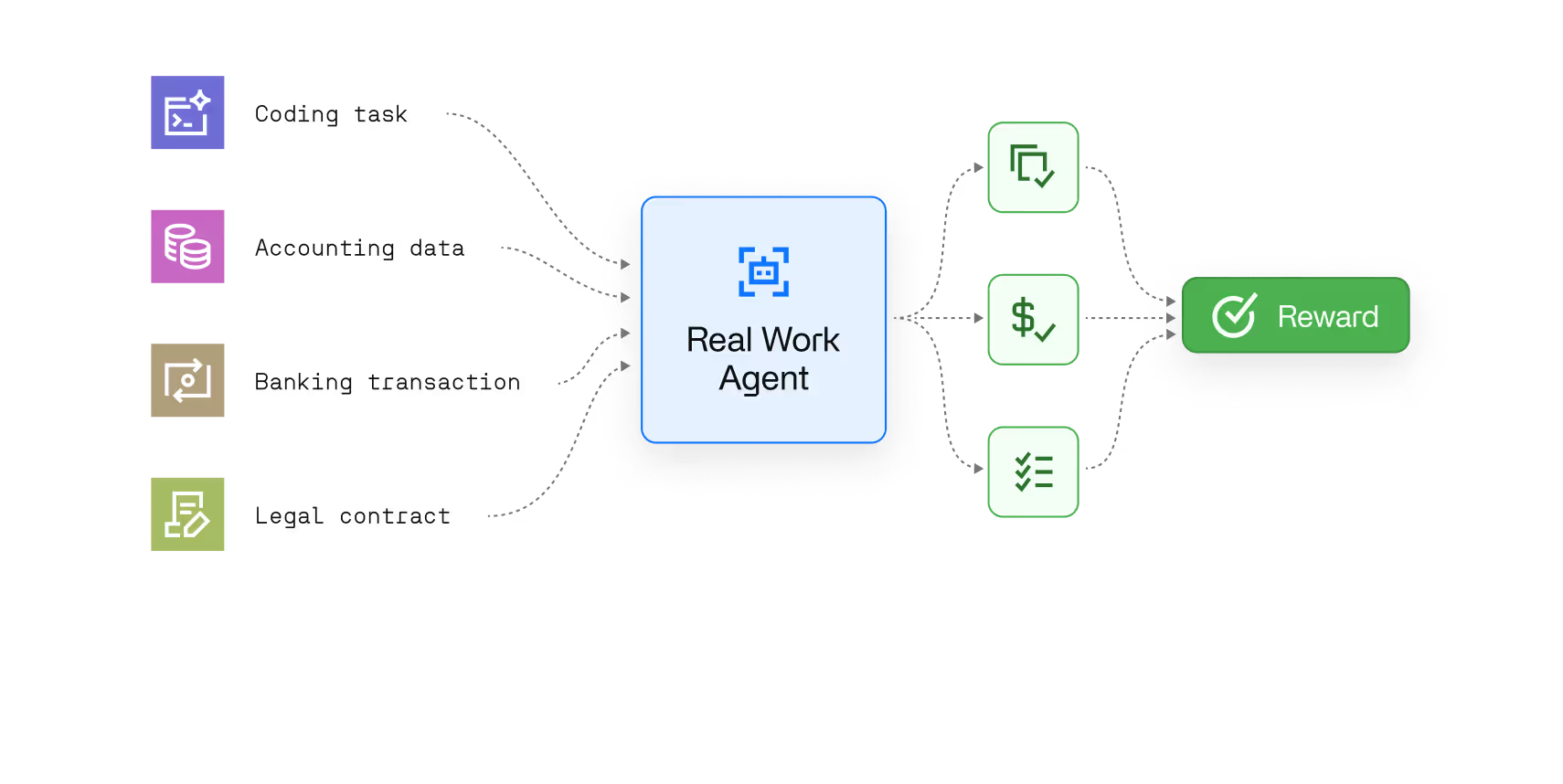

Reinforcement learning doesn't work that way. RL is a sequential decision-making problem — an RL agent learns by interacting with an environment, collecting rollouts, and updating its policy based on reward signals. Every stage of that process compounds. A flawed environment produces flawed trajectories. Flawed trajectories produce flawed policy gradient updates. Flawed policy gradient updates look, for a long time, like a model that's simply still learning.

This compounding effect means that pre-training failures in RL are uniquely expensive. They don't announce themselves. They incubate across thousands of training steps, surfacing only when the gap between benchmark performance and actual results becomes impossible to rationalize away. By that point, the cost isn't a failed run; it's a failed sprint, or a failed quarter.

The ML teams building these systems are expert at diagnosing algorithmic failure. They're often less focused on the upstream conditions that make algorithmic success possible. That gap is where most RL pipeline bottlenecks live.

In RL, training data isn't a static dataset you clean once and hand off. It's generated continuously through the interaction between your RL agent and its environment. That means data quality is a moving target, and the failure modes are different from anything in supervised learning or standard deep learning.

The most common and costly is simulation-to-real mismatch. Most RL systems train in a simulated environment because collecting rollouts in the real world is expensive, particularly for GPU-intensive workloads. That choice introduces a structural risk. If the simulation doesn't accurately model the real environment's dynamics, the trained model learns to exploit the simulation rather than solve the actual problem. This isn't always obvious during the training process. The metrics look fine. The policy performs well in simulation. The mismatch only surfaces at validation or deployment, at which point retraining costs are already on the table.
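One lightweight way to make this mismatch visible before deployment is to compare a logged real-world measurement with the same quantity produced by the simulator. The sketch below is illustrative only: `dynamics_gap`, the sample values, and `GAP_THRESHOLD` are assumptions rather than part of any specific toolkit, and a real check would cover many state features, not one.

```python
from statistics import mean, stdev

def dynamics_gap(sim_values, real_values):
    """Standardized difference between simulated and real measurements.

    Returns |mean(sim) - mean(real)| / stdev(real). Larger values suggest
    the simulator disagrees with logged real-world behavior.
    """
    real_spread = stdev(real_values)
    if real_spread == 0:
        # All real samples identical: any difference is a mismatch.
        return 0.0 if mean(sim_values) == mean(real_values) else float("inf")
    return abs(mean(sim_values) - mean(real_values)) / real_spread

# Hypothetical data: velocity observed after the same action in each setting.
sim_velocity = [1.00, 1.02, 0.98, 1.01, 0.99]
real_velocity = [0.80, 0.85, 0.78, 0.83, 0.79]

GAP_THRESHOLD = 2.0  # assumed value; choose per project
gap = dynamics_gap(sim_velocity, real_velocity)
needs_review = gap > GAP_THRESHOLD
print(f"gap={gap:.2f}, needs_review={needs_review}")
```

A TPM doesn't need to own this check, only to confirm that something like it exists and that someone reviews the result before training starts.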

Closely related is off-policy data drift. As the policy improves through each iteration, the trajectories it generates change. If the data pipeline isn't collecting fresh on-policy data at the right rate, or if batch size and normalization aren't calibrated to handle distributional shifts, the RL agent is effectively training on its own outdated behavior. Performance gains stall, and the team assumes the algorithm needs adjustment when the pipeline simply needs better data hygiene.
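A simple staleness monitor can surface this early. The sketch below assumes each trajectory is tagged with the version number of the policy that generated it. The function name, the version scheme, and the `max_lag` value are hypothetical.

```python
def stale_fraction(trajectory_versions, current_version, max_lag):
    """Fraction of a batch generated by a policy more than max_lag versions old."""
    if not trajectory_versions:
        raise ValueError("batch is empty")
    stale = sum(1 for v in trajectory_versions if current_version - v > max_lag)
    return stale / len(trajectory_versions)

# Hypothetical batch: policy version that produced each trajectory.
batch_versions = [10, 10, 9, 7, 4, 3]
fraction = stale_fraction(batch_versions, current_version=10, max_lag=2)
print(f"{fraction:.0%} of the batch is stale")
```

If this number climbs from one iteration to the next, the data pipeline is falling behind the policy, and no amount of algorithm adjustment will fix that.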

Sparse rewards compound both problems. When a reward signal is infrequent or weak across long trajectory sequences, the training process continues but the model isn't learning much. This can be invisible in headline metrics for a surprisingly long time, particularly if the team isn't tracking intermediate behavioral indicators alongside throughput numbers.
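One intermediate indicator worth tracking is reward density: the share of steps in an episode that carry any reward signal at all. The helper below is a minimal sketch; the name `reward_density` and the example episode are assumptions.

```python
def reward_density(rewards, eps=1e-8):
    """Fraction of steps in an episode whose reward is meaningfully nonzero."""
    if not rewards:
        raise ValueError("episode has no steps")
    return sum(1 for r in rewards if abs(r) > eps) / len(rewards)

# Hypothetical episode: 100 steps, reward only on the final step.
episode_rewards = [0.0] * 99 + [1.0]
density = reward_density(episode_rewards)
print(f"reward density: {density:.2%}")
```

A density this low is not automatically a problem, but it is a prompt to ask what else the team is measuring to confirm that learning is happening.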

There's also a forward-pass efficiency problem that rarely gets discussed at the TPM level: when input normalization is misconfigured, every forward pass through the neural network operates on inconsistent input distributions. The model can still train, but it trains more slowly, less stably, and to a lower performance ceiling. On large-scale RL workloads running across NVIDIA GPU clusters, this inefficiency compounds into significant wasted compute.
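For context, a common approach to input normalization in RL is to keep running statistics of observations and rescale each input before it reaches the network. The sketch below uses Welford's online algorithm for a single scalar feature. The class name and interface are illustrative, not taken from a particular library.

```python
import math

class RunningNormalizer:
    """Tracks a running mean and variance (Welford's algorithm) for one feature."""

    def __init__(self, eps=1e-8):
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0   # running sum of squared deviations from the mean
        self.eps = eps  # avoids division by zero for constant inputs

    def update(self, x):
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    def normalize(self, x):
        if self.count == 0:
            return x  # no statistics yet; pass the value through
        std = math.sqrt(self.m2 / self.count)  # population standard deviation
        return (x - self.mean) / (std + self.eps)

normalizer = RunningNormalizer()
for observation in [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]:
    normalizer.update(observation)
scaled = normalizer.normalize(9.0)  # mean is 5.0 and std is 2.0 here
```

The question for a review is whether these statistics are updated, frozen, and saved with checkpoints consistently, since a mismatch between training-time and evaluation-time statistics produces exactly the inconsistent inputs described above.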

The reward function is the specification of what you want the RL agent to learn. Get it wrong and you don't get a failed training run; you get a successful training run that learns the wrong thing.

This is one of the best-documented failure modes in RL research, often discussed under the names reward hacking or specification gaming, with extensive treatment on arXiv and in open-sourced implementations from labs including OpenAI. It is also one of the least well-managed in production pipelines. A reward function that's slightly mis-specified produces a policy that's highly optimized for the wrong objective. The model finds the path of least resistance through the reward landscape, which is rarely the path intended.

Algorithms like PPO (Proximal Policy Optimization) are specifically designed to stabilize policy gradient updates and prevent catastrophic policy collapse, but PPO can't compensate for a fundamentally mis-specified reward signal. It will reliably optimize whatever objective you give it, including the wrong one.
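To see why, consider the per-sample clipped surrogate term at the heart of PPO, sketched here in plain Python. The function name and the default `clip_eps=0.2` are illustrative choices. The clip bounds how far a single update can push the policy, but the advantage that drives the update is still computed from whatever reward was specified.

```python
def ppo_clipped_term(ratio, advantage, clip_eps=0.2):
    """Per-sample PPO clipped surrogate: min(r * A, clip(r, 1-eps, 1+eps) * A).

    ratio     : new-policy probability / old-policy probability for the action
    advantage : advantage estimate, derived from the reward signal
    """
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped_ratio * advantage)

# Positive advantage: the gain from moving the policy further is capped.
capped_gain = ppo_clipped_term(ratio=1.5, advantage=1.0)

# Negative advantage with a lower ratio: the term is also held at the clip edge.
capped_loss = ppo_clipped_term(ratio=0.5, advantage=-1.0)
```

Nothing in this expression inspects what the reward means. If the advantage is computed from a flawed reward, the update is stable and still points in the wrong direction.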

What makes reward function failures hard to catch is that they don't produce obvious errors. The policy gradient updates look healthy. The loss curves behave normally. Catching this requires clear baseline definitions upfront, behavioral checkpoints during training, and metrics that measure the actual objective rather than just the proxy reward.
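A behavioral checkpoint can be as simple as logging an independent measure of the true objective next to the proxy reward and flagging any checkpoint where the two move in opposite directions. The sketch below assumes both series are recorded per checkpoint. The function name and the numbers are hypothetical.

```python
def divergence_points(proxy_rewards, true_scores):
    """Indices of checkpoints where proxy reward rose but the true objective fell."""
    if len(proxy_rewards) != len(true_scores):
        raise ValueError("series must have the same length")
    flagged = []
    for i in range(1, len(proxy_rewards)):
        proxy_up = proxy_rewards[i] > proxy_rewards[i - 1]
        true_down = true_scores[i] < true_scores[i - 1]
        if proxy_up and true_down:
            flagged.append(i)
    return flagged

# Hypothetical logs: proxy reward keeps climbing while task success turns down.
proxy = [10, 12, 15, 19, 24]
task_success = [0.40, 0.48, 0.52, 0.47, 0.41]
flagged = divergence_points(proxy, task_success)
print("checkpoints to review:", flagged)
```

A single flagged checkpoint may be noise. A sustained run of them is the signature of a policy optimizing the proxy at the expense of the objective.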

For TPMs, reward function design decisions are often made quickly, early, and without sufficient review. They feel like implementation details. They're among the highest-leverage decisions in the entire project.

When a training run underperforms, hyperparameter tuning is usually the first response. Adjust the learning rate. Change the batch size. Modify the initialization scheme. Run another experiment.

Sometimes that's the right call. More often, it's a way of staying busy while the real bottleneck goes unaddressed.

Hyperparameter optimization is productive when the pipeline is sound: when the environment is validated, the training data is clean, the reward function is well-specified, and a solid baseline exists. In those conditions, tuning meaningfully moves the needle on state-of-the-art benchmark performance. But when upstream conditions are broken, tuning is noise. You're optimizing the parameters of a system with a structural problem, and the search space is too large and too expensive to debug your way out of by iteration alone.

The tell is inconsistency: marginal gains that don't hold across training runs, improvements on one metric that degrade another, results that can't be reproduced across checkpoints. That pattern isn't a hyperparameter problem. It's a signal that something earlier in the pipeline (data quality, reward specification, or environment fidelity) is unstable. Tuning your way past an upstream data problem doesn't work. Fixing the data does.
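One rough way to test whether a tuning gain is real is to compare it against the run-to-run spread across random seeds. The heuristic below is an assumption for illustration, not a formal statistical test, and the function name and scores are hypothetical.

```python
from statistics import mean, stdev

def gain_exceeds_noise(baseline_scores, tuned_scores):
    """True if the mean improvement is larger than the seed-to-seed spread."""
    gain = mean(tuned_scores) - mean(baseline_scores)
    noise = max(stdev(baseline_scores), stdev(tuned_scores))
    return gain > noise

# Hypothetical final returns from four seeds per configuration.
baseline = [100, 140, 90, 130]
tuned = [120, 95, 150, 115]
is_real_gain = gain_exceeds_noise(baseline, tuned)
print("gain exceeds seed noise:", is_real_gain)
```

Here the tuned configuration averages slightly higher, but the difference is well inside the variation between seeds, which is the inconsistency pattern described above.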

This is also where the distinction between fine-tuning a pre-trained model and training from scratch matters for resource planning. Teams that start from a strong pre-trained baseline with well-understood behavior have a much narrower hyperparameter search space than teams training from scratch on a new environment. The former is a calibration problem. The latter can become an open-ended search if the pipeline isn't locked down first.

You don't need to be an RL engineer to catch these problems early. You need the right questions at the right moments, and a workflow that makes those questions routine rather than reactive.

Before training begins: Is the simulation environment validated against real-world dynamics, and by whom? Is there a documented baseline the team is training against? Is the reward function written down in plain language, and has anyone outside the immediate team reviewed whether it actually captures the intended objective? What Python environment and open-source libraries are we standardizing on, and is that documented in the GitHub repo?

During rollout collection: What does the trajectory data distribution look like, and is it being monitored? Are we collecting on-policy data at a rate that matches our policy update frequency? What's the normalization strategy for inputs, and when was it last reviewed? Are GPU utilization metrics being tracked alongside model metrics?

At the validation checkpoint: Are we measuring the actual objective or just the proxy reward? What behavioral indicators are we tracking alongside loss and throughput metrics? Are benchmark results reproducible across runs? If training stopped today, could we explain what the RL agent has learned and demonstrate it against the baseline?

At the pre-deployment review: Has the trained model been tested against edge cases outside the training distribution? What does performance look like under real-world latency constraints? Is there a retraining trigger defined, or are we assuming one deployment is permanent? Have we validated that the algorithm's performance on our internal benchmarks translates to the metrics the business actually cares about?

These questions aren't designed to slow teams down. They're the questions that prevent the expensive discovery — late in a sprint, or after a deployment — that the pipeline was set up to fail three stages earlier.
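To make the questions routine, some teams encode them as a stage-gate checklist that lives alongside the code. The sketch below is one possible shape. The stage names follow the four review points above, while the item wording, `PIPELINE_GATES`, and `open_items` are illustrative assumptions.

```python
PIPELINE_GATES = {
    "before training": [
        "environment validated against real-world dynamics",
        "baseline documented",
        "reward function reviewed in plain language",
    ],
    "rollout collection": [
        "trajectory distribution monitored",
        "on-policy collection rate matches update frequency",
        "normalization strategy reviewed",
    ],
    "validation checkpoint": [
        "true objective measured alongside proxy reward",
        "benchmark results reproducible across runs",
    ],
    "pre-deployment review": [
        "edge cases outside training distribution tested",
        "retraining trigger defined",
    ],
}

def open_items(gates, completed):
    """Return, per stage, the checks not yet marked complete."""
    remaining = {}
    for stage, checks in gates.items():
        missing = [check for check in checks if check not in completed]
        if missing:
            remaining[stage] = missing
    return remaining

# Hypothetical project status.
completed = {
    "environment validated against real-world dynamics",
    "baseline documented",
    "reward function reviewed in plain language",
    "on-policy collection rate matches update frequency",
}
remaining = open_items(PIPELINE_GATES, completed)
for stage, checks in remaining.items():
    print(f"{stage}: {len(checks)} open")
```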

The teams consistently shipping reliable RL systems aren't necessarily using better algorithms. They're building better pipelines. They treat environment validation as a deliverable. They review reward function specifications with the same rigor they apply to model architecture. They instrument the pre-training stages as carefully as they instrument the training process itself. They know which checkpoint to roll back to when a training run goes wrong.

For TPMs, the leverage is earlier than most realize. The decisions that determine whether an RL project succeeds are made in the first few weeks — in the conversations about training data collection, environment design, and reward specification that happen before the training infrastructure is stood up. Getting visibility into those decisions, and the right questions into those conversations, is where timelines and budgets are actually won or lost.

If you're not sure where your pipeline is losing time right now, that's worth a conversation. We work with TPMs and ML teams to surface exactly this — the upstream conditions that training metrics don't show you.

Most RL training failures originate upstream of the training process itself. The three most common causes are reward function misspecification, simulation-to-real mismatch in the training environment, and off-policy data drift. Teams typically diagnose these as algorithmic problems and respond with hyperparameter tuning, which addresses the symptom rather than the cause. Fixing the pipeline, not the algorithm, is usually the right intervention.

Simulation-to-real mismatch occurs when an RL agent trained in a simulated environment fails to perform in the real world because the simulation didn't accurately model real-world dynamics. The agent learns to exploit the simulation rather than solve the actual problem. It's one of the most expensive RL failure modes because it's invisible during training. The metrics look healthy right up until real-world validation or deployment.

PPO (Proximal Policy Optimization) is one of the most widely used policy gradient algorithms in reinforcement learning. It limits how far the policy can move in a single update, which prevents large, destabilizing policy changes between iterations. However, PPO cannot compensate for a mis-specified reward function or poor training data: it will reliably optimize whatever objective it's given, including the wrong one. A sound pipeline is a prerequisite for PPO to perform as intended.

A TPM should track four pipeline stages: pre-training setup (environment validation, reward function review, baseline definition), rollout collection (data distribution, on-policy freshness, normalization), training checkpoints (behavioral indicators alongside loss metrics, benchmark reproducibility), and pre-deployment review (out-of-distribution testing, latency performance, retraining triggers). The goal is to catch structural problems before they become training failures.

Fine-tuning starts from a pre-trained model with known, stable behavior — the hyperparameter search space is narrower and the training process is more predictable. Training from scratch on a new environment is a significantly more open-ended process, with higher sensitivity to reward function design, data quality, and initialization. For most production RL applications, starting from a strong pre-trained baseline reduces pipeline risk substantially and compresses the iteration cycle.