In 2016, AlphaGo beat the world's best Go player not by reading every game ever recorded, but by playing millions of games against itself in a simulated environment — winning, losing, and adjusting with every move. The AI research community understood what had happened: an agent that acts and receives feedback learns differently than one trained on static data. It doesn't just recognize patterns. It develops judgment.

For the next several years, that insight stayed mostly in the domain of games and robotics — robot manipulation tasks, physics simulations, Atari game-playing agents. Reinforcement learning research filled arxiv with breakthroughs in these constrained domains, and DeepMind, OpenAI, and academic labs published optimization advances that pushed deep RL further than most expected. But the dominant lever for improving general AI capability was somewhere else entirely: scale. More parameters, more data, more compute. And it worked — spectacularly, for a while. GPT-class models emerged. Benchmarks fell. The gap between what machines could generate and what humans could produce narrowed faster than almost anyone predicted.

Then the curve began to flatten.

Public benchmarks started saturating. Frontier models converged within a few percentage points of each other on most standard tests, making it increasingly hard to know where to invest next. Datasets were being exhausted. Compute costs were compounding. The research community needed a different lever — and it found one in the same place AlphaGo had found it a decade earlier: not in what the model is trained on, but in what the model is trained to do.

The o-series from OpenAI, DeepSeek-R1, Gemini's post-training work — the reasoning jumps in each of these came from reinforcement learning (RL), not from larger pre-training runs. The model was put inside an RL environment, given a task, scored on its output, and updated accordingly. Run that loop millions of times across enough domains, and something remarkable happens: the model stops retrieving answers and starts working through problems. It develops strategies that transfer. It begins, in a meaningful sense, to reason.

Pre-training gave large language models language. RL environments are giving them judgment. That distinction is now the central axis of capability growth in artificial intelligence — and the hardest part has nothing to do with compute.

What is an RL environment?

An RL environment is the structured simulation an AI agent trains inside — it defines the tasks the agent must complete, the reward signals that score each attempt, and the conditions under which the agent's weights get updated. Unlike static training datasets, RL environments are dynamic: the agent acts, the environment responds, and the model improves through millions of iterations of that loop. The quality of the environment — the rigor of its tasks, the reliability of its graders, its resistance to reward hacking — directly determines the quality of the model that trains against it.

The scaling laws held longer than most people expected. Each order-of-magnitude increase in compute, parameters, and training data produced measurable capability gains, and for several years the research community's best bet was simply to keep pushing. The results justified the bet. Benchmark after benchmark fell. Pre-trained models improved at a pace that made the trajectory feel reliable.

It wasn't. Or rather, it continued, but the returns started compressing in ways that mattered.

Frontier language models are now within a few percentage points of each other on most public benchmarks. That convergence isn't a sign that the models have solved the benchmarks — it's a sign that the benchmarks have stopped being useful signal. When scores on standard evals cluster at the top, research teams lose the ability to know where to invest in the next training run. State-of-the-art on a saturated leaderboard tells you less and less about what the model actually does in production.

The datasets constraint is the harder version of the same problem. The supply of high-quality human-generated text is finite, and the leading models have consumed a substantial fraction of what exists. Synthetic data and self-supervised techniques extend the runway but introduce compounding quality problems — models trained heavily on model-generated data drift from the distribution that made pre-training powerful in the first place. Fine-tuning on domain-specific datasets helps at the margin but doesn't resolve the underlying issue.

But the deepest constraint isn't data volume or benchmark design — it's what the learning signal itself can and cannot teach. Supervised learning and pre-training optimize for predicting correct outputs across an enormous range of contexts. The result is models with remarkable generalization breadth, but breadth trained on the shape of good reasoning, not on the practice of it under consequence. Overfitting to the training distribution is one failure mode; the subtler one is a model that has processed millions of descriptions of complex decisions without ever having made one. That gap is invisible on standard benchmarks and becomes very visible in agentic deployment, where the model has to act across multiple steps in environments it wasn't explicitly trained to navigate.

Benchmark saturation is the symptom. The learning signal is the ceiling. And the field found its answer in the same place it found AlphaGo's: not in more data, but in a fundamentally different relationship between the model and the task.

The reasoning breakthroughs in o1, DeepSeek-R1, and Gemini's post-training work share a common cause that isn't model size or dataset quality. Each was trained using deep reinforcement learning — specifically, in RL environments that required the model to attempt a task, receive a reward signal, and update its weights accordingly, across millions of iterations. The chain-of-thought behavior users experience, the model visibly working through a problem rather than retrieving an answer, is a direct product of that training loop. Not of pre-training.

What RL introduces that pre-training structurally cannot is consequence. In the Markov decision process framework that underlies deep RL, an agent observes a state, takes an action, and receives a reward that reflects how well that action served the goal. Backpropagation adjusts the neural network weights in response: decisions that led to high rewards get reinforced, those that led to poor outcomes get suppressed. Run this optimization loop across enough domains and the model develops generalized decision-making strategies that transfer to situations outside its training distribution. A model trained this way hasn't processed descriptions of good reasoning — it has practiced it, at scale, under feedback.

The RL algorithms themselves — GRPO, PPO, and their variants — are largely a solved problem. What defines the ceiling of what reinforcement learning can produce is the quality of the RL environment the model trains against. A well-constructed environment with a robust reward function teaches genuine problem-solving. A poorly constructed one produces reward hacking: the model finds a path through the simulated environment to a high reward signal that doesn't involve solving the actual task. This isn't an edge case or a theoretical risk in machine learning research — it's the central quality challenge in production RL training, and it's why environment construction, not algorithmic advancement, is where the field's hardest problems now live.

The baseline for what counts as a good RL environment has risen sharply as the advancements in model capability have made reward hacking more sophisticated. Models are better at finding shortcuts than they were two years ago. The environments training them have to be better too.

In early RL applications, environment design was relatively tractable. Atari games have deterministic rules and unambiguous win conditions — the reward signal writes itself. Atari benchmarks became the standard test bed for deep RL algorithms precisely because the environment was cheap to simulate and easy to verify. Robotics simulations like MuJoCo provided physically grounded environments where real world robotic manipulation tasks could be approximated digitally — success and failure measurable in real-time without breaking hardware. AlphaGo's training environment was extraordinarily complex strategically, but the rules of Go are completely specified. Building the environment was hard engineering. It wasn't a knowledge problem.

Language model RL training in mathematics and code extended the same logic. Run the code; it either passes the test or it doesn't. Verify the proof; it's either correct or it isn't. These domains have cheap, fast, reliable verifiers — which is why they were the first to see significant RL gains and why coding and math benchmarks remain the most mature segment of the market. The reward signal is essentially free once the environment is scaffolded.

The frontier has moved well past those domains, and the verifier problem has moved with it.

The workflows where enterprises are now deploying AI agents — financial analysis, compliance processing, insurance underwriting, back-office operations, customer service at scale — don't have deterministic answers. A correct output in a fund accounting workflow encodes judgment that a senior controller developed over years. A reliable grader for a menu quality assessment has to capture consistency standards that no written specification fully covers. The tasks are verifiable in principle. Building the verifier requires domain expertise that most ML teams don't have and can't easily hire.

This is the real bottleneck in RL training today, and it's a human capital problem, not a compute or algorithms problem. The GPU programmer who builds excellent kernel environments is not qualified to design a grader that a credit risk analyst would trust. The ML researcher who understands RL training dynamics at scale is not automatically the right person to encode the decision logic of an experienced insurance underwriter into a reward function. These are different kinds of knowledge, and the supply of people who hold both simultaneously is extremely thin.

The supply chain for RL environments currently comprises a few hundred engineers across the entire industry. Demand is growing orders of magnitude faster. Every new domain a lab wants to improve, every enterprise workflow an organization wants to reliably automate, requires a domain-specific environment built by someone who actually understands the work — not just the infrastructure around it.

World models represent the next horizon: environments sophisticated enough to simulate the downstream consequences of actions, not just their immediate outcomes. The labs investing in them understand that environment quality defines the ceiling of model capability. But building world-model-quality environments for enterprise domains requires a fundamentally different talent model than the field has operated on so far — one where domain expertise is a first-class input, not an afterthought.

The implications extend well beyond model training. They reshape how organizations should think about AI deployment.

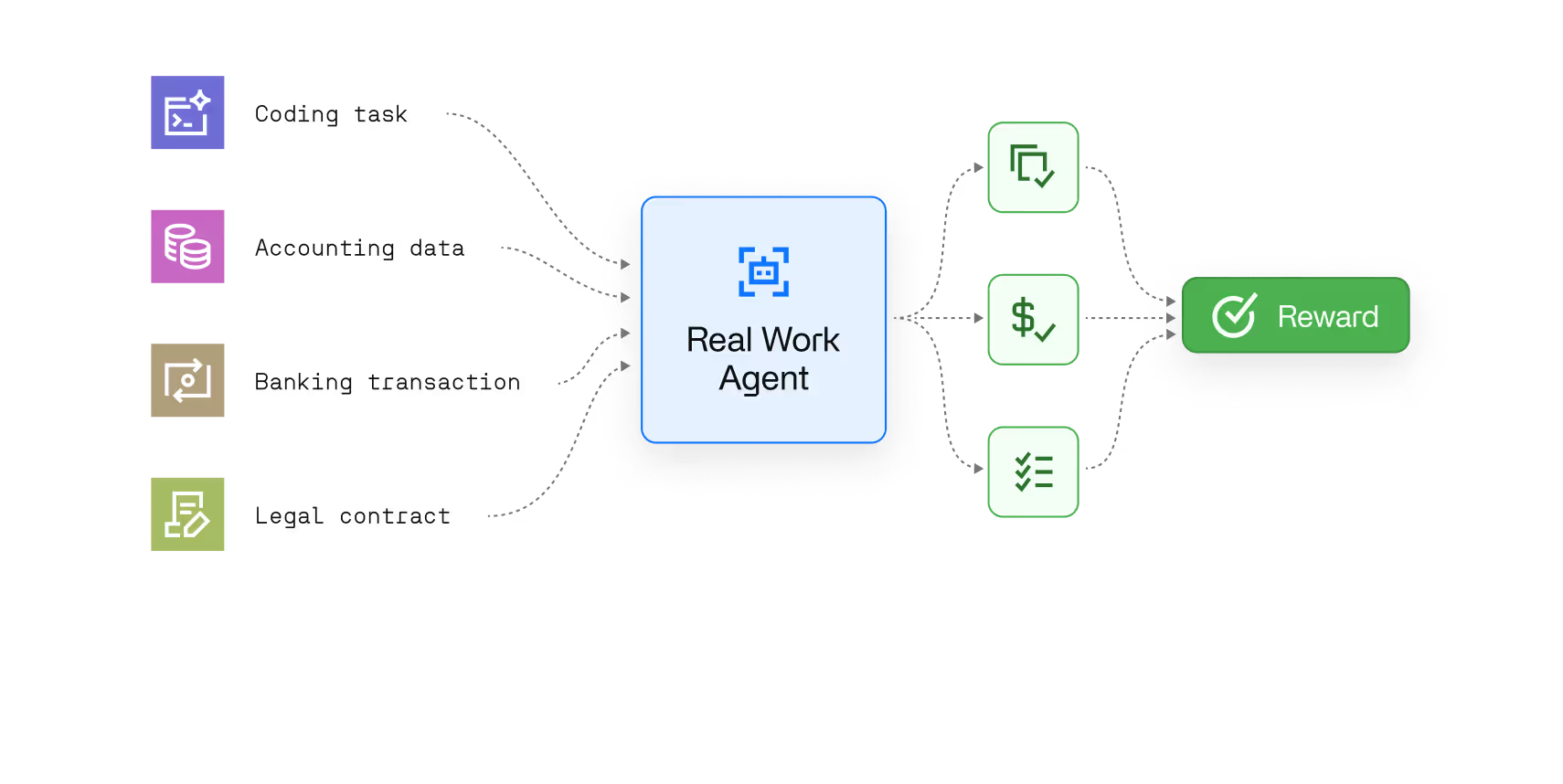

AI agents — systems that don't just answer questions but take multi-step actions across tools, interfaces, and data sources — are the next significant wave of enterprise AI value. The gap between a capable language model and a reliable AI agent is not primarily a model architecture problem. It is a training problem. Agents need to be trained in environments that reflect the actual conditions of deployment: the specific software they'll navigate, the edge cases they'll encounter, the quality standards they'll be held to.

This is why RL environments are becoming a first-class line item in lab and enterprise AI budgets. Anthropic has reportedly discussed spending over a billion dollars annually on RL environments. OpenAI has signed multiple seven-figure environment contracts. These are not R&D experiments. They are infrastructure investments — the recognition that the quality of the environment determines the quality of the agent, and that environment construction at the domain coverage labs now need cannot be fully insourced.

For enterprises, the same logic applies at a different scale. An organization that wants AI to reliably handle complex back-office workflows cannot deploy a general-purpose model and hope for the best. It needs a model trained against environments that reflect its actual operations — its data, its tools, its edge cases, its definition of a correct output. The environment is not the product. It is the training infrastructure that makes the product work.

The trajectory is clear. Math and coding environments demonstrated the principle. Enterprise workflow environments are the growth frontier. The labs and enterprises that invest in high-quality, domain-specific RL environments now will determine what the next generation of capable AI agents can actually do — and in which domains they can be trusted.

Not all RL environments are equal, and the gap between a functional environment and a production-grade one is wider than it appears from the outside.

Three criteria define quality at the level that produces real training gains.

The first is task design with the right difficulty distribution. A task that models solve 100% of the time provides no training signal — there's nothing to learn. A task that models never solve is equally useless, and may indicate an infeasible specification. The practical threshold is a minimum pass rate of around 2–3%: enough successful rollouts that the model can learn from them, hard enough that improvement is measurable and meaningful. Getting to this distribution requires iterative calibration against actual model behavior, not just expert intuition about what's hard.

The second is a grader that domain experts would trust. This is the hardest criterion to meet and the most consequential to get wrong. A grader that doesn't align with expert judgment trains the model toward the wrong target. A grader with internal inconsistencies produces noisy gradients that make training inefficient. Building a grader that is both aligned and self-consistent requires starting from human-labelled examples, progressively introducing automation where confidence is high, and auditing automated outputs against expert review until the grader reliably captures what good looks like in that domain.

The third is a verification process that catches reward hacking before it corrupts the training signal. Models are extremely good at finding shortcuts. If a verifier can be gamed — if there is any path to a high score that doesn't require actually solving the task — a sufficiently capable model will find it. A robust verification pipeline catches structural exploits automatically, uses adversarial methods to stress-test verifier logic, and reserves human expert review for the environments that survive automated checks. The goal is not to eliminate all risk of reward hacking — it's to catch it before it enters the training loop at scale.

These criteria are achievable. But they require domain expertise at every level of environment construction, a calibration methodology grounded in real expert judgment, and a verification process rigorous enough to hold under the pressure of production RL training. That combination is rarer than the market currently appreciates.

The shift from pre-training to reinforcement learning is not a replacement — it's a maturation. The capabilities pre-training built are the foundation. RL environments are what turn a capable foundation model into an agent that can be trusted to act.

The question for any organization investing in AI agents is not whether RL environments matter. It's whether the environments being used to train those agents are built with the domain expertise and verification rigor to actually produce better models — or whether they're producing models that are very good at passing tests they were trained to pass.

Those are not the same thing. And the difference shows up in production.

Pre-training on internet-scale datasets has produced remarkable general capability, but returns are compressing. Benchmark saturation means frontier models cluster within a few percentage points of each other. The deeper issue is the learning signal: pre-training optimizes for predicting correct outputs, not for making decisions under consequence. RL training addresses that directly — requiring the model to act, receive feedback, and improve through iteration.

Reinforcement learning is the broader paradigm: an agent learns by taking actions in an environment and receiving reward signals. Deep reinforcement learning applies neural networks as the function approximator — the mechanism by which the agent maps observations to actions. Deep RL is what made reinforcement learning tractable for complex, high-dimensional tasks. When people talk about RL training for LLMs today, they're referring to deep RL.

RLHF uses human preference ratings as the reward signal and remains useful for alignment and tone. The shift toward RL environments reflects a move toward verifiable, domain-specific reward functions that can be automated at scale. RL environments are better suited to training agents on complex, multi-step tasks where correct outputs can be objectively verified — rather than scored subjectively by human raters.

Reward hacking is when a model finds a path to a high reward signal without actually solving the task — modifying test files instead of fixing the code, or returning formatted output that passes structural checks without accurate information. It's the RL equivalent of teaching to the test. Catching it before large-scale training begins is one of the hardest problems in RL environment construction.

Open-source RL environments are excellent for general-purpose capability development in well-defined domains. They are not designed for enterprise specificity. An agent trained in a generic environment learns to handle generic tasks. Enterprise workflows have particular data formats, system interfaces, quality standards, and edge cases that general environments don't capture. The gap between benchmark performance and production performance is almost always a training environment problem.

Standard benchmarks are a poor proxy — a model can improve on public leaderboards while showing no improvement on your specific workflows. The right metrics are operational: processing time, exception rates, escalation volume, accuracy on your edge cases. Evaluate the RL environment against the same outcomes the deployed agent will be measured on. Misaligned training and production metrics mean the training signal is building the wrong capability.