Every enterprise of scale is already capturing visual data. Warehouses and factory floors have cameras. Hospitals have imaging equipment generating thousands of files a day. Sports venues, retail environments, and logistics hubs record everything but act on very little of it, because no human team can watch that much footage and extract insight from it at scale.

Computer vision technology changes that equation. It doesn't replicate human sight; instead, it does something human reviewers cannot: it processes every frame, across every feed, continuously, and converts visual information into structured data. The question for enterprise leaders isn't whether the technology works. It's whether your operation has the conditions to make it work for you.

This guide explains what computer vision actually is, the applications of computer vision that are creating measurable enterprise value today, and how to tell whether your operation is ready to use it.

What is computer vision? Computer vision is a field of artificial intelligence that enables machines to interpret and act on visual data (images, video, and real-time camera feeds) much as humans use sight to make decisions. A computer vision system processes digital images as grids of pixel values, uses machine learning models to identify objects, patterns, and motion, and converts those visual inputs into structured data that can trigger automated workflows or surface decision-ready insights. Unlike traditional image processing, which follows fixed rules, modern computer vision models learn from training data and can be retrained as conditions change.

The pipeline is simpler than most vendor presentations suggest. A camera captures a visual input like a frame of video, a still image, or a medical scan. A computer vision model processes that frame, using machine learning algorithms to identify what's in it: objects, boundaries, movement, anomalies. That output, structured data, then feeds a dashboard, triggers an automated workflow, or flags something for human review.
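To make the pipeline concrete, here is a minimal Python sketch of its last two steps: turning a model's per-frame output into structured records and routing each one to a dashboard, an automated workflow, or human review. The `Detection` record, the `route` rule, and the 0.8 review threshold are illustrative assumptions, and the model is a stub; a real deployment would call a trained detector at that point.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class Detection:
    label: str
    confidence: float
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


@dataclass
class FrameResult:
    camera_id: str
    frame_index: int
    detections: list = field(default_factory=list)


def route(result: FrameResult, alert_labels: set, review_threshold: float) -> str:
    """Decide where one frame's structured output goes next (hypothetical rule)."""
    alerts = [d for d in result.detections if d.label in alert_labels]
    if not alerts:
        return "dashboard"      # nothing actionable: record for reporting only
    if max(d.confidence for d in alerts) >= review_threshold:
        return "workflow"       # confident alert: trigger the automated action
    return "human_review"       # uncertain alert: a person decides


def run_pipeline(frames: Iterable, model: Callable, alert_labels: set,
                 review_threshold: float = 0.8):
    """frames yields (camera_id, frame_index, image); model maps image -> list of Detection."""
    routed = []
    for camera_id, frame_index, image in frames:
        result = FrameResult(camera_id, frame_index, model(image))
        routed.append((result, route(result, alert_labels, review_threshold)))
    return routed


# Usage with a stub "model": each fake image is already a list of detections.
frames = [
    ("cam-1", 0, [Detection("defect", 0.95, (10, 10, 40, 40))]),
    ("cam-1", 1, [Detection("defect", 0.55, (12, 11, 41, 39))]),
    ("cam-2", 0, [Detection("person", 0.99, (0, 0, 50, 120))]),
]
for result, destination in run_pipeline(frames, model=lambda image: image,
                                         alert_labels={"defect"}):
    print(result.camera_id, result.frame_index, destination)
# cam-1 0 workflow
# cam-1 1 human_review
# cam-2 0 dashboard
```

Keeping the routing rule separate from the model means the threshold can be tuned by the operations team without retraining anything.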

What makes modern computer vision different from older rule-based image processing is deep learning. Convolutional neural networks (CNNs) process visual information by learning to recognize patterns across layers of abstraction (edges, then shapes and textures, then whole objects) rather than following a fixed specification of what to look for. This is why they generalize: a CNN trained on thousands of images of a component can often flag defect variants it was never explicitly shown, because it has learned what a sound part looks like rather than memorizing a list of failure modes.
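The layered pattern learning described above rests on one operation, convolution. The sketch below applies a single 3×3 kernel to a tiny grayscale patch in plain Python. The kernel is hand-set to the Sobel horizontal-gradient filter so the output is easy to check; in a CNN, the weights of each kernel are learned from training data, and many such layers are stacked.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation (what CNN "convolution" layers actually compute).

    image and kernel are lists of rows; the output shrinks by (kernel size - 1)
    in each dimension because the kernel never hangs off the edge.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the kh x kw window whose top-left corner is (i, j)
            acc = sum(image[i + di][j + dj] * kernel[di][dj]
                      for di in range(kh) for dj in range(kw))
            row.append(acc)
        output.append(row)
    return output


# Sobel kernel for horizontal intensity change (responds to vertical edges).
# In a CNN these nine weights would be learned, not hand-set.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

# 5x5 grayscale patch: dark on the left, bright on the right.
patch = [[0, 0, 0, 9, 9] for _ in range(5)]
print(convolve2d(patch, SOBEL_X))  # [[0, 36, 36], [0, 36, 36], [0, 36, 36]]
```

The response is zero over the flat dark region and jumps where the patch changes from dark to bright, the kind of low-level feature early CNN layers tend to pick up.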

The ceiling of any computer vision system is set by its training data. A model learns to see what it has been shown. If the training dataset is narrow, low-quality, or poorly annotated, the model will perform well in controlled conditions and degrade in production. This is the variable most organizations underestimate, and the reason annotation quality is not an afterthought but the foundation of the entire system. Image recognition, image classification, and object detection all depend on the same thing: high-quality labeled datasets that reflect real-world conditions, not idealized ones.
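Because so much depends on the labels, it is worth auditing them before any training run. The sketch below checks two of the simplest failure modes: bounding boxes that fall outside the image or have no area, and classes with too few examples to learn from. The annotation record format and the `min_per_class` cutoff are assumptions for illustration, not a standard.

```python
from collections import Counter


def audit_annotations(annotations, image_sizes, min_per_class=50):
    """Flag simple annotation problems before training.

    annotations: list of dicts like {"image": "a.png", "label": "scratch", "box": (x0, y0, x1, y1)}
    image_sizes: dict mapping image name -> (width, height) in pixels
    Returns (issues, per-class counts, classes with fewer than min_per_class examples).
    """
    issues = []
    for idx, ann in enumerate(annotations):
        x0, y0, x1, y1 = ann["box"]
        width, height = image_sizes[ann["image"]]
        # A valid box has positive area and lies fully inside the image
        if not (0 <= x0 < x1 <= width and 0 <= y0 < y1 <= height):
            issues.append((idx, "box outside image or zero area"))
    counts = Counter(ann["label"] for ann in annotations)
    rare = sorted(label for label, n in counts.items() if n < min_per_class)
    return issues, counts, rare


anns = [
    {"image": "a.png", "label": "scratch", "box": (10, 10, 20, 20)},
    {"image": "a.png", "label": "dent", "box": (30, 5, 25, 15)},     # x1 < x0
    {"image": "b.png", "label": "scratch", "box": (0, 0, 700, 10)},  # wider than image
]
sizes = {"a.png": (640, 480), "b.png": (640, 480)}
issues, counts, rare = audit_annotations(anns, sizes, min_per_class=2)
print(issues)  # [(1, 'box outside image or zero area'), (2, 'box outside image or zero area')]
print(rare)    # ['dent']
```

Checks like these catch mechanical errors only; whether a label is *correct* still needs review by someone who knows what a defect looks like in your context.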

The operational case for computer vision is not that it replaces human judgment. It's that it does things human vision structurally cannot.

Human reviewers miss events, tire over time, and disagree with one another, which makes QA inconsistent at scale. A computer vision system can watch every feed simultaneously, process every frame without fatigue, and apply the same standard every time. That consistency is the source of the value: not the algorithm itself, but the reliability of applying it at a scale no human team can match.

Three capability categories define where that value shows up in practice.

Detection and classification. Object detection identifies what is present in an image and where. Image classification assigns a category to what the model sees. In manufacturing, this means defect detection on an assembly line at a speed and granularity that human quality control cannot approach, catching surface anomalies at the pixel level before a component moves to the next stage. In logistics, object recognition tracks inventory movement across a warehouse floor in real time. In facilities management, facial recognition governs access control without manual verification.
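Object detectors usually emit many overlapping candidate boxes for the same object, so a post-processing step called non-maximum suppression (NMS) keeps only the best one. The sketch below is a plain-Python version of greedy, single-class NMS built on intersection over union (IoU). The (x0, y0, x1, y1) box format and the 0.5 overlap threshold are common conventions, assumed here rather than taken from any particular library.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x0, y0, x1, y1)."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix1 - ix0) * max(0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it too much, repeat.

    detections: list of (score, box) pairs for a single class.
    """
    remaining = sorted(detections, key=lambda d: d[0], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)
        kept.append(best)
        remaining = [d for d in remaining if iou(best[1], d[1]) < iou_threshold]
    return kept


raw = [(0.9, (10, 10, 50, 50)), (0.8, (12, 12, 52, 52)), (0.7, (100, 100, 140, 140))]
print(non_max_suppression(raw))  # [(0.9, (10, 10, 50, 50)), (0.7, (100, 100, 140, 140))]
```

The two heavily overlapping boxes collapse into one detection, while the separate object at (100, 100) survives.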

Motion and behavior analysis. Computer vision systems track movement across time. This is where segmentation, distinguishing one object or region from another, becomes operationally significant. On a factory floor, the system can monitor whether a workflow is being followed correctly, not just whether an object is present. In retail, real-time queue analysis at checkout identifies bottlenecks before they affect customer experience. In sports, motion tracking converts player movement into time-series data that would take hours of manual tagging to produce.
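Tracking movement over time means linking each frame's detections to the objects seen in earlier frames. A minimal way to do that is greedy nearest-centroid matching, sketched below. This is a simplified stand-in for the trackers used in production, which typically add motion models and appearance features; the `CentroidTracker` class and its `max_distance` gate are hypothetical.

```python
import math
from itertools import count


def centroid(box):
    x0, y0, x1, y1 = box
    return ((x0 + x1) / 2, (y0 + y1) / 2)


class CentroidTracker:
    """Greedy nearest-centroid tracker (a simplified sketch).

    Each new detection is linked to the closest not-yet-matched track whose last
    centroid lies within max_distance pixels; otherwise it starts a new track.
    """

    def __init__(self, max_distance=50.0):
        self.max_distance = max_distance
        self.tracks = {}         # track_id -> list of (frame_index, (cx, cy))
        self._next_id = count(1)

    def update(self, frame_index, boxes):
        unmatched = set(self.tracks)  # existing tracks still free in this frame
        assigned = []
        for box in boxes:
            c = centroid(box)
            best_id, best_dist = None, math.inf
            for tid in sorted(unmatched):
                d = math.dist(c, self.tracks[tid][-1][1])
                if d <= self.max_distance and d < best_dist:
                    best_id, best_dist = tid, d
            if best_id is None:
                best_id = next(self._next_id)  # no nearby track: start a new one
                self.tracks[best_id] = []
            else:
                unmatched.discard(best_id)
            self.tracks[best_id].append((frame_index, c))
            assigned.append(best_id)
        return assigned


tracker = CentroidTracker(max_distance=20)
print(tracker.update(0, [(0, 0, 10, 10), (100, 100, 110, 110)]))  # [1, 2]
print(tracker.update(1, [(102, 101, 112, 111), (3, 2, 13, 12)]))  # [2, 1]
```

Tracks are never retired in this sketch, so a long-running system would also need to expire tracks that stop receiving detections.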

Document and image analysis. Optical character recognition (OCR) extracts structured information from visual inputs such as forms, labels, handwritten notes, and scanned documents. In healthcare, this means processing imaging intake paperwork at volume so that X-rays reach the right reviewer faster than a manual triage system allows. In financial services, it means reading and classifying documents without a human touching every file.
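OCR output is only useful once it becomes fields a downstream system can consume. Assuming an OCR engine has already returned plain text for a shipping label, the sketch below pulls out a few fields with regular expressions and sends any document with missing fields to human review. The field names, patterns, and routing labels are hypothetical and would need to match your own document layouts.

```python
import re

# Hypothetical patterns for one shipping-label layout; real documents need their own.
FIELD_PATTERNS = {
    "tracking_number": re.compile(r"Tracking\s*#?:?\s*([A-Z0-9]{10,22})"),
    "weight_kg": re.compile(r"Weight:?\s*([\d.]+)\s*kg", re.IGNORECASE),
    "postcode": re.compile(r"\b(\d{5})\b"),
}


def extract_fields(ocr_text):
    """Turn raw OCR text into a record plus a list of fields that were not found."""
    record, missing = {}, []
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(ocr_text)
        if match:
            record[name] = match.group(1)
        else:
            missing.append(name)
    return record, missing


def route_document(ocr_text):
    record, missing = extract_fields(ocr_text)
    # Incomplete extractions go to a person instead of silently entering the system
    return ("human_review" if missing else "auto_ingest"), record, missing


text = "SHIP TO: 221 Main St, Springfield 62704\nTracking #: 1Z999AA10123456784\nWeight: 2.4 kg"
print(route_document(text)[0])                               # auto_ingest
print(route_document("Tracking: ABCDEFGHIJ12 to 90210")[2])  # ['weight_kg']
```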

The domains where computer vision is producing measurable operational returns in 2026 are not experimental. They are in production at scale.

Manufacturing is one of the strongest real-world applications because visual quality control is both critical and difficult to perform consistently at speed. Defect detection on an assembly line catches anomalies that human inspectors miss under time pressure, and the model can be retrained to improve as more annotated data accumulates from your specific production environment.

Healthcare is where the volume problem is most acute. Medical imaging generates more data than radiologists can review at the pace clinical demand requires. Computer vision models that process X-rays, scans, and pathology slides at scale don't replace clinical judgment. They handle the triage layer so clinical expertise is applied where it matters most.
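In a triage setup, the model does not make the diagnosis; it changes the order in which studies are read. A minimal sketch, assuming a model that returns an urgency score between 0 and 1 for each study, is a priority queue that surfaces the highest-scoring studies first while preserving arrival order for ties. The `TriageQueue` class, study IDs, and scores are made up for illustration.

```python
import heapq


class TriageQueue:
    """Reading order for imaging studies, highest model urgency first.

    urgency_score is assumed to be a model output in [0, 1]; ties keep arrival order.
    Every study is still read by a clinician; only the order changes.
    """

    def __init__(self):
        self._heap = []
        self._arrivals = 0

    def add(self, study_id, urgency_score):
        # heapq is a min-heap, so negate the score to pop the most urgent first
        heapq.heappush(self._heap, (-urgency_score, self._arrivals, study_id))
        self._arrivals += 1

    def next_study(self):
        return heapq.heappop(self._heap)[2] if self._heap else None


queue = TriageQueue()
for study_id, score in [("xr-001", 0.12), ("xr-002", 0.91), ("xr-003", 0.47), ("xr-004", 0.91)]:
    queue.add(study_id, score)
print([queue.next_study() for _ in range(5)])  # ['xr-002', 'xr-004', 'xr-003', 'xr-001', None]
```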

Retail and logistics benefit from computer vision in inventory management, checkout automation, and real-time shelf analytics. The common thread is converting visual data into operational signals — what's in stock, what's moving, where the bottlenecks are — without requiring a human to watch and report.

Sports and live events represent a different application of the same capability. Motion tracking across a venue converts footage into structured time-series data: player positioning, movement patterns, physical workload. The Charlotte Hornets use computer vision models to generate draft analysis insights that would otherwise require weeks of manual video review.
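Once a tracker has produced positions for a player, turning them into workload metrics is straightforward arithmetic. The sketch below assumes positions have already been mapped from pixels to court coordinates in meters (a calibration step not shown here) and computes total distance covered and peak speed between consecutive samples. The `workload_summary` function and the sample values are illustrative.

```python
import math


def workload_summary(samples):
    """samples: list of (t_seconds, x_m, y_m) for one player, sorted by time.

    Returns (total distance in meters, peak speed in m/s between consecutive samples).
    """
    total, peak = 0.0, 0.0
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        step = math.hypot(x1 - x0, y1 - y0)  # straight-line distance between samples
        total += step
        if t1 > t0:                           # skip duplicate timestamps
            peak = max(peak, step / (t1 - t0))
    return total, peak


samples = [(0.0, 0.0, 0.0), (1.0, 3.0, 4.0), (2.0, 3.0, 4.0), (2.5, 3.0, 7.0)]
print(workload_summary(samples))  # (8.0, 6.0)
```

Real tracking data is noisy, so production systems usually smooth positions before differencing; otherwise jitter inflates both distance and peak speed.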

Security and facilities use computer vision for perimeter monitoring, occupancy analytics, and access control. The value is in real-time detection: not reviewing footage after an incident, but identifying conditions that precede one.

Autonomous vehicles and robotics sit at the frontier of computer vision technology, where algorithms need to interpret complex, dynamic environments in real time with near-zero latency. Augmented reality applications similarly depend on computer vision to overlay digital information on physical environments accurately. Generative AI is beginning to intersect with computer vision too, using synthetic visual data to augment training datasets and simulate rare scenarios that real-world collection cannot cover. Most enterprises are not deploying at that frontier level yet, but these applications of computer vision are accelerating the maturity of the broader ecosystem, and the Python libraries, open-source frameworks, and GPU infrastructure that power them are increasingly accessible to enterprise teams building production systems.

When computer vision pilots fail, it is usually because of one of three gaps that open up between the demo environment and real operational conditions.

The model wasn't trained on your environment. Generic deep learning models trained on public datasets don't generalize to your camera angles, your lighting conditions, or your product-specific quality standards. A defect detection model trained on someone else's factory floor will miss the defect types your line produces if it has never seen them. Production-grade performance requires training on your own operational data, annotated to your quality standard with the specificity your workflows demand.
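One way to expose this gap before deployment is to evaluate the same model separately on the vendor's benchmark and on annotated footage from your own line. The sketch below computes recall on true defects per site; the `recall_by_site` function, site names, and counts are invented purely to illustrate the comparison.

```python
from collections import defaultdict


def recall_by_site(results):
    """results: iterable of (site, is_defect, predicted_defect) tuples.

    Returns the fraction of true defects the model caught, per site.
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for site, is_defect, predicted in results:
        if is_defect:  # recall only looks at items that really are defects
            totals[site] += 1
            hits[site] += int(predicted)
    return {site: hits[site] / totals[site] for site in totals}


results = ([("benchmark", True, True)] * 9 + [("benchmark", True, False)]
           + [("our_line", True, True)] * 6 + [("our_line", True, False)] * 4
           + [("our_line", False, False)] * 20)
print(recall_by_site(results))  # {'benchmark': 0.9, 'our_line': 0.6}
```

In this made-up example, a model that catches 90% of defects on the benchmark catches only 60% on your line, a gap that only shows up when you test on your own data.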

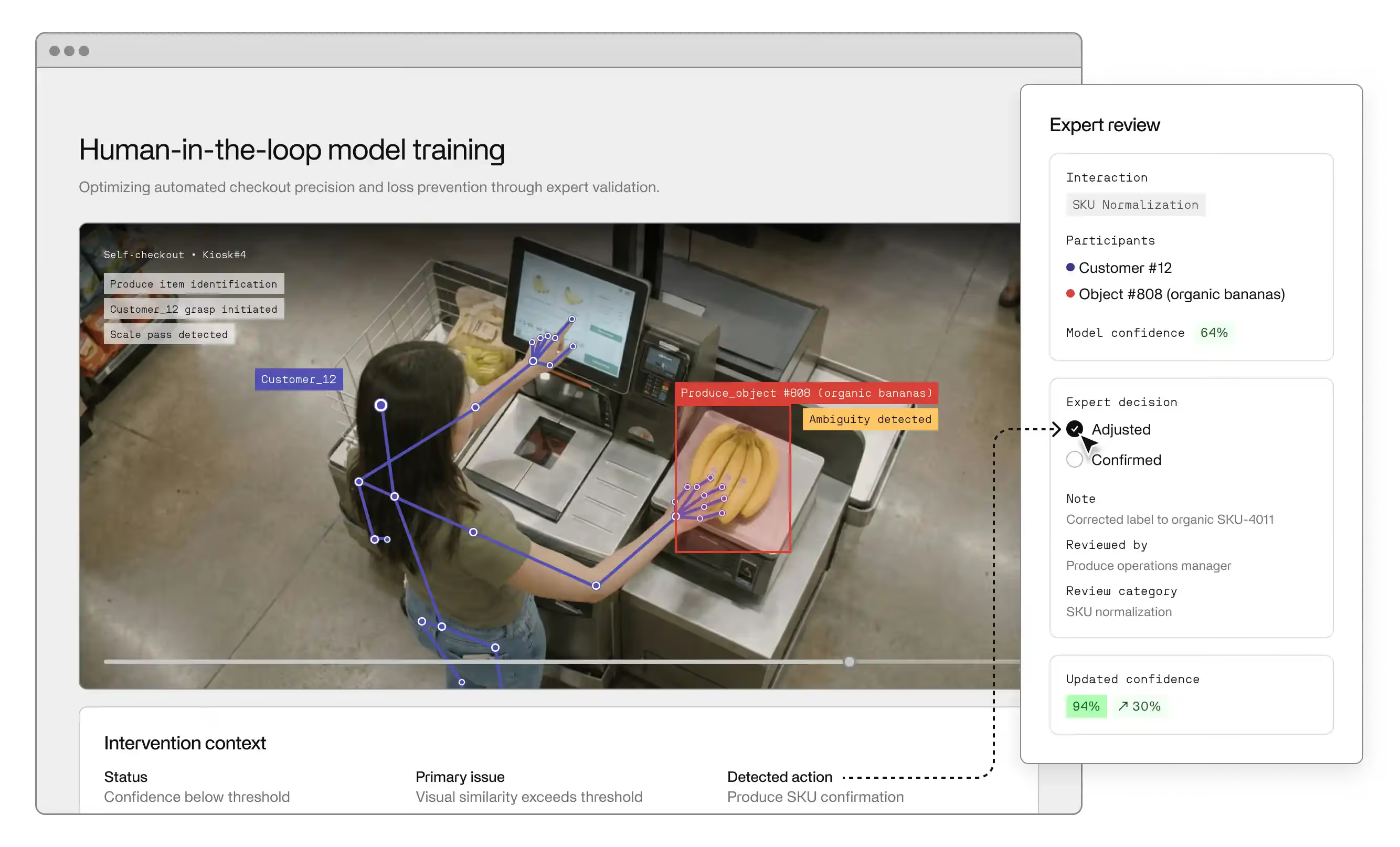

There's no continuous retraining loop. Conditions change: new products are introduced, seasonal variation shifts lighting, equipment is replaced. A computer vision model without ongoing human-in-the-loop evaluation and retraining will degrade as the gap widens between what it was trained on and what it sees in production. The annotation and evaluation pipeline is not a setup cost; it is an ongoing operational function. Frameworks such as TensorFlow and PyTorch, and YOLO-family detectors built on them, make fine-tuning on new data straightforward, but keeping that loop running requires dedicated oversight, not a set-and-forget deployment.
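Drift is easier to manage when it is measured. One common heuristic, used here as an illustration rather than a prescribed method, is the population stability index (PSI) between the model's confidence scores at deployment and its scores on recent traffic. The bin count, the epsilon, and the 0.25 alert threshold are conventional rules of thumb, assumed here; a PSI alert is a prompt to sample frames for human review, not proof that the model is wrong.

```python
import math


def population_stability_index(baseline, recent, bins=10, eps=1e-6):
    """PSI between two samples of model confidence scores in [0, 1].

    Scores are bucketed into equal-width bins; eps keeps empty bins from
    producing log(0) or division by zero.
    """
    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            counts[min(int(s * bins), bins - 1)] += 1  # a score of 1.0 goes in the top bin
        return [max(c / len(scores), eps) for c in counts]

    p, q = proportions(baseline), proportions(recent)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def needs_review(baseline, recent, threshold=0.25):
    # 0.25 is a widely used rule of thumb for a "significant" shift, not a standard
    return population_stability_index(baseline, recent) > threshold


baseline = [0.92, 0.95, 0.97, 0.91, 0.94] * 20  # confidences at deployment
recent = [0.55, 0.62, 0.93, 0.58, 0.71] * 20    # confidences after, say, a lighting change
print(needs_review(baseline, baseline), needs_review(baseline, recent))  # False True
```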

The output isn't connected to where decisions happen. A computer vision system that generates alerts nobody sees, or flags defects without stopping the production line, delivers no value. The structured data the model produces needs to feed into the systems where decisions are actually made: ERP, MES, dashboards, operations workflows. Integration is not a final step; it is a design requirement from the beginning.
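Integration mostly comes down to emitting events in a shape the receiving system understands. The sketch below builds a JSON message for a detected defect and decides whether to request a line stop or only a review. Every field name, the `stop_threshold`, and the assumption that your MES accepts such a message are hypothetical; the real schema comes from the system you are integrating with.

```python
import json
from datetime import datetime, timezone


def defect_event(line_id, station, label, confidence, frame_ref, stop_threshold=0.9):
    """Build a JSON message for a downstream MES/ERP consumer (hypothetical schema)."""
    action = "stop_line" if confidence >= stop_threshold else "flag_for_review"
    event = {
        "event_type": "visual_defect",
        "line_id": line_id,
        "station": station,
        "label": label,
        "confidence": round(confidence, 3),
        "requested_action": action,
        "frame_ref": frame_ref,  # lets a reviewer pull up the exact frame later
        "detected_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(event)


message = defect_event("L3", "weld-2", "porosity", 0.962, "cam-7/frame-18842")
print(json.loads(message)["requested_action"])  # stop_line
```

Including a reference back to the source frame keeps every automated action auditable, which matters once the output is allowed to stop a line.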

Addressing all three requires more than a technology selection. It requires a deployment partner who has built production computer vision systems in your domain, understands the annotation requirements specific to your use case, and can manage the full lifecycle from model training through to operational integration. Open-source tooling (Python libraries such as OpenCV, TensorFlow, and PyTorch, plus GPU-accelerated inference frameworks) has made the infrastructure layer accessible to any competent engineering team. Smartphones and other edge devices can now run lightweight computer vision models locally, which simplifies deployment where bandwidth or latency constraints rule out cloud inference. What remains scarce is the domain expertise and human annotation quality that determine whether the model is actually learning the right thing. A hands-on understanding of your specific workflows, such as what a defect looks like in your context or what a correctly processed document should contain, is what separates a computer vision project that compounds from one that stalls after the pilot.

Before evaluating vendors or scoping a deployment, three questions will tell you more about your readiness than any RFP.

1. Do you have consistent camera coverage of the workflow you want to monitor?

Computer vision requires reliable visual input. Low resolution, inconsistent angles, and heavy occlusion limit what the model can reliably see. You don't need cinematic footage, but you need footage that a human reviewer could use to make a decision.

2. Do you have examples of good and bad outcomes you could use as training data?

AI systems learn from labelled examples. If you have historical footage of defective components, anomalous behaviour, or correctly processed documents, that data is the foundation of a training dataset. If you don't, the first phase of deployment is generating and annotating that data, which affects timeline and cost.

3. Is there a clear operational metric that computer vision would move?

The computer vision applications that compound are those tied to a specific, measurable outcome: defect rate, processing time, inventory accuracy, triage speed. If the use case doesn't connect to a metric your operation already tracks, the ROI case will be difficult to make and the deployment will be difficult to optimise.

If the answer to all three is yes, you have a viable starting point. If not, those are the gaps to close before engaging a vendor. The technology works best when the operational conditions are right, not when the deployment is designed to find out whether they are.

Computer vision is not a research project anymore. It is operational infrastructure for enterprises that need to act on what their cameras already capture. The gap between "we record everything" and "we act on what matters" is a training and deployment problem, not a technology problem.

See how Invisible builds computer vision models for enterprise operations.

Image processing applies fixed, rule-based transformations to images — adjusting contrast, resizing, filtering noise. Computer vision uses machine learning to interpret images: understanding what is in them, where objects are, and what is happening across frames over time. Image processing handles preprocessing steps before the model runs, but the two are not interchangeable. Computer vision learns; image processing executes.
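As a contrast, here is what a fixed, rule-based image processing step looks like: a min-max contrast stretch on a grayscale image stored as nested Python lists. The same formula runs on every input and nothing is learned, which is why steps like this are typically used for preprocessing rather than interpretation. The `contrast_stretch` function and its output range are illustrative.

```python
def contrast_stretch(image, out_min=0, out_max=255):
    """Fixed min-max contrast stretch on a grayscale image (list of rows).

    The darkest input pixel maps to out_min and the brightest to out_max;
    nothing about the image content is learned or interpreted.
    """
    lo = min(min(row) for row in image)
    hi = max(max(row) for row in image)
    if hi == lo:  # a flat image has no contrast to stretch
        return [[out_min for _ in row] for row in image]
    scale = (out_max - out_min) / (hi - lo)
    return [[round(out_min + (p - lo) * scale) for p in row] for row in image]


print(contrast_stretch([[20, 70], [120, 120]], out_min=0, out_max=200))  # [[0, 100], [200, 200]]
```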

How much training data you need depends on the task. Common object detection tasks can leverage transfer learning, where a pre-trained model is fine-tuned on a smaller domain-specific dataset. Tasks specific to your operation (a particular defect type, a product-specific quality standard) require annotated training data from your environment. Quality matters more than volume: a smaller, well-annotated dataset will typically outperform a large, poorly labelled one.

Human vision is interpretive, contextual, and reliable at low volumes. Computer vision is consistent, scalable, and tireless, but only within the distribution of what it was trained to see. A human expert reasons about unfamiliar situations using broader knowledge; a computer vision model can fail silently on inputs outside its training distribution. Human-in-the-loop oversight remains essential for high-stakes decisions while computer vision handles the volume layer.

Computer vision can be deployed in regulated environments, and healthcare is one of the most active deployment environments today. Visual data such as patient scans, X-rays, and other medical images can be processed on-premise or in secure environments where data never leaves your controlled infrastructure, which supports compliance with HIPAA, GDPR, and equivalent frameworks. Model outputs are subject to clinical governance requirements, which is why human-in-the-loop oversight is built into healthcare deployments rather than treated as optional.

Image classification assigns a single label to an entire image. Object detection identifies specific objects within an image, locates them with bounding boxes, and classifies each independently. Segmentation assigns a class to every pixel rather than drawing a box. Classification is sufficient for document routing and basic triage; detection is needed for assembly line QA and inventory tracking; segmentation is required where exact boundaries matter, as in much of medical imaging and other tasks that need precise spatial understanding.
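The difference between the three tasks is clearest in the shape of their outputs. The hypothetical data classes below sketch one plausible representation of each; real frameworks use their own formats, usually arrays or tensors rather than Python lists.

```python
from dataclasses import dataclass


@dataclass
class ClassificationOutput:
    label: str          # one label for the whole image
    confidence: float


@dataclass
class DetectionOutput:
    # one (label, confidence, (x0, y0, x1, y1)) entry per detected object
    boxes: list


@dataclass
class SegmentationOutput:
    mask: list          # class id for every pixel; same height x width as the image
    class_names: dict   # class id -> human-readable name

    def pixel_area(self, class_name):
        """Count the pixels assigned to a class, e.g. to measure a region's size."""
        ids = {i for i, name in self.class_names.items() if name == class_name}
        return sum(1 for row in self.mask for value in row if value in ids)


doc = ClassificationOutput(label="invoice", confidence=0.97)
shelf = DetectionOutput(boxes=[("carton", 0.93, (5, 5, 60, 40)), ("carton", 0.88, (70, 5, 125, 40))])
scan = SegmentationOutput(mask=[[0, 0, 1],
                                [0, 1, 1]],
                          class_names={0: "background", 1: "lesion"})
print(doc.label, len(shelf.boxes), scan.pixel_area("lesion"))  # invoice 2 3
```

Only the segmentation output supports a question like "how large is this region?", which is why it is the choice when boundaries matter.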

Model training typically runs on GPU-accelerated hardware. Inference runs on GPUs, edge devices, or purpose-built accelerators, depending on latency requirements. Edge deployment keeps visual data within your controlled infrastructure and reduces latency; cloud inference works for lower-stakes, non-real-time applications. The right choice is driven mainly by latency: real-time defect detection on a fast production line has fundamentally different requirements than overnight batch processing.
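A quick way to reason about the latency requirement is to work out the time budget per item. Assuming every part must be inspected before the next one arrives, and that one inference device serves some number of cameras in turn, the per-frame budget follows from line speed alone. The function names and the numbers in the example are illustrative.

```python
def latency_budget_ms(parts_per_minute, cameras_per_device=1):
    """Milliseconds available per frame if each part must be inspected before the
    next arrives and one device serves cameras_per_device cameras in turn."""
    per_part_ms = 60_000 / parts_per_minute
    return per_part_ms / cameras_per_device


def fits_budget(parts_per_minute, inference_ms, overhead_ms, cameras_per_device=1):
    # overhead_ms covers capture, preprocessing, and sending the result downstream
    return inference_ms + overhead_ms <= latency_budget_ms(parts_per_minute, cameras_per_device)


print(latency_budget_ms(600))                          # 100.0
print(fits_budget(600, 35, 10, cameras_per_device=2))  # True
print(fits_budget(1200, 35, 20))                       # False
```

At 600 parts per minute each part allows 100 ms; with two cameras per device each frame gets 50 ms, so a 35 ms model with 10 ms of overhead fits. On a 1,200-parts-per-minute line with 20 ms of overhead, the same model does not, while an overnight batch job has no per-item budget at all.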