Computer vision model degradation occurs when the accuracy of machine learning models declines after deployment due to a mismatch between training datasets and real-world conditions. This decay, known as model drift, results in unreliable outputs that can disrupt automated workflows. Whether the use case is quality control in a factory or document triage involving LLMs in a contact center, these failures increase manual intervention costs. Organizations can prevent this degradation by implementing continuous feedback loops, establishing automated retraining pipelines, and maintaining high-quality human-in-the-loop validation to ensure artificial intelligence models remain aligned with changing operational environments.

Your dashboard shows a healthy green light, yet your floor managers report an increase in missed defects or misclassified documents. This is the reality of silent failure in computer vision. Unlike traditional software that crashes when it breaks, an AI model fails by slowly losing its grip on reality while remaining 99% confident in its wrong answers. You likely deployed your system after seeing high benchmarks and performance baselines during the pilot phase, only to find that real-world performance has eroded within six months.

This article identifies the structural triggers and specific failure modes of that decay, providing a framework for building robustness into your production AI systems. For a broader understanding of how these systems are structured before they reach the production stage, consult our guide on what computer vision is and how it creates enterprise value.

A computer vision model is a prisoner of its initial training data.

If you used deep learning to train your algorithms in a perfectly lit lab, they will fail once they hit a warehouse floor at 2:00 PM with harsh sunlight coming through the skylights. The input sensors like your cameras, remain the same, but the visual environment changes. Dust on a lens, a shift in camera angle by three degrees during maintenance, or a change in the physical packaging of a product can render previously reliable machine learning models and their preprocessing steps useless.

When the pixels the model sees in production no longer match the pixel patterns it learned during model training, the system enters a state of covariate shift. It tries to force-fit new, unfamiliar data into old categories. This means the system you bought to save labor costs is now generating false positives that require expensive human review. The model hasn't changed, but the world has, and the model lacks the inherent flexibility to know it is looking at something new.

Maintaining model performance requires a recognition that training datasets are not permanent ground truth but snapshots of a specific moment in time that must be constantly refreshed.

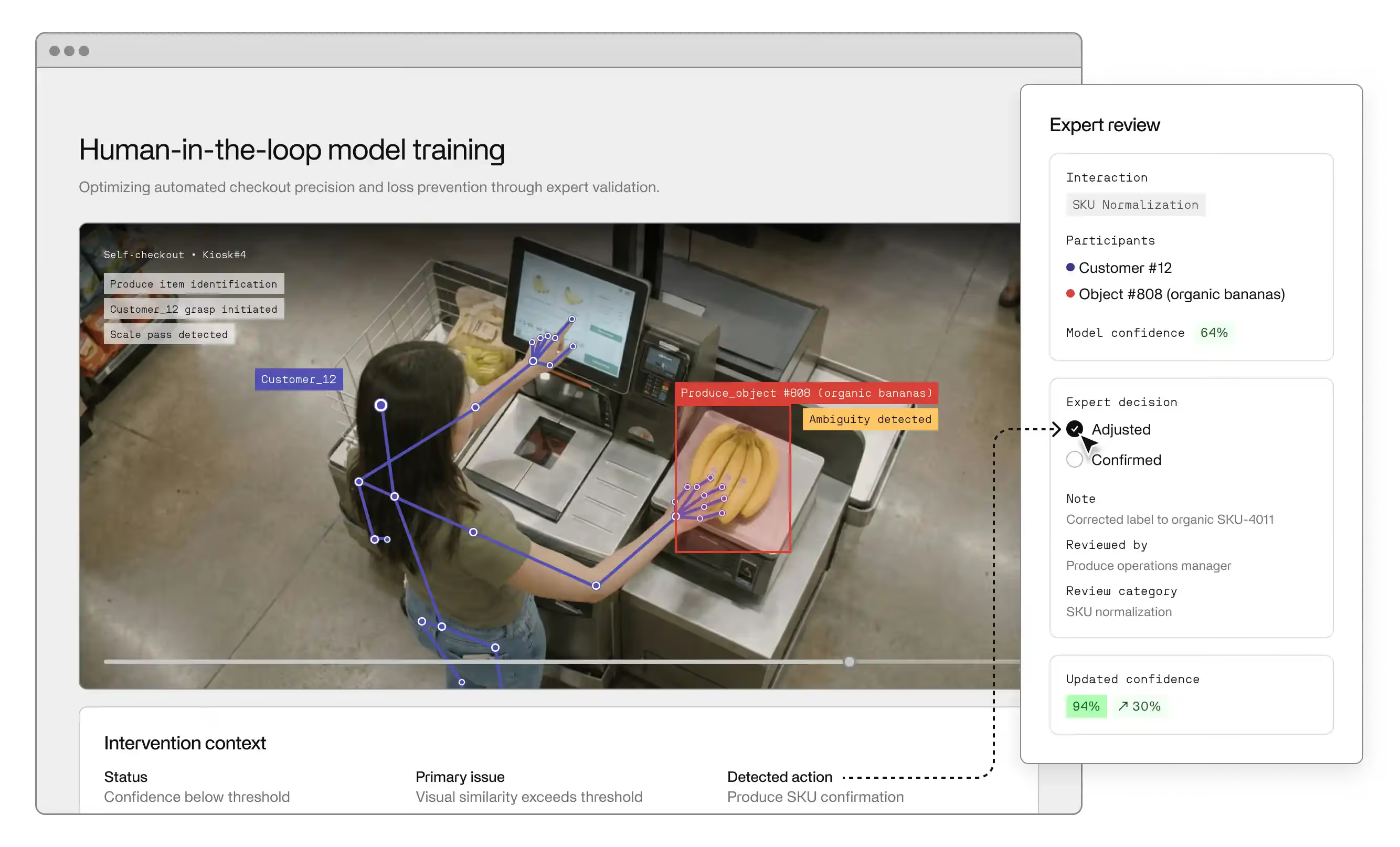

The only way to catch a model that is failing quietly is to keep humans in the flow of data. Feedback loops turn model outputs into a continuous source of new training data. When a human reviewer corrects a misclassification, that correction should not just fix the immediate error; it should feed back into the retraining pipeline to ensure the model learns from the mistake.

Without these loops, your AI system is a closed loop that slowly becomes an echo chamber of its own biases. Automated monitoring of metrics like confidence scores and latent space shifts can provide early warnings, but the drift occurs when your business rules outpace your algorithms

While data drift involves changes to the input, concept drift is a failure of the relationship between those inputs and your desired outputs. Your computer vision system may identify objects with 100% precision, but if the underlying business logic has shifted, those outputs become technically accurate but operationally useless. In a healthcare triage environment, a model trained to flag anomalies based on 2024 diagnostic protocols will effectively become a source of clinical risk by 2026 if those thresholds for high-risk categorization move. The model is not broken; it is simply executing an obsolete strategy.

This misalignment creates a widening gap in enterprise workflows that standard software monitoring rarely catches. Algorithms are literal and lack the departmental context to understand when a product line has been updated or when quality control tolerances have tightened. You must integrate your ML models into your primary change management process rather than treating them as isolated technical assets.

Failure to synchronize operational updates with model retraining ensures that your automation will eventually work against your current business goals.

The impressive benchmarks seen during vendor demos often result from models that have been overfitted to narrow, idealized datasets. Overfitting occurs when an algorithm memorizes the specific noise and artifacts of its training data rather than the universal patterns of the task. If a model is trained only on pristine images from a high-end sensor, its performance will collapse the moment it encounters the motion blur, varied focal lengths, or sensor grain of a real-time production environment.

Robustness in AI systems is measured by how gracefully a model handles out-of-distribution edge cases, not by how it performs on a static validation set. A brittle, overfitted model creates an unpredictable operational burden because it lacks the ability to generalize across the chaotic variability of a live contact center or factory floor. This is particularly critical in complex environments like healthcare or logistics where the cost of a false negative is high.

By integrating human-in-the-loop oversight into your standard workflows, you convert your operational staff from manual data processors into high-level supervisors of an improving AI asset.

Treating model maintenance as an ad-hoc troubleshooting project creates an operational bottleneck that scales poorly. If your engineering team or data scientists must manually intervene to gather new data and re-run model training on expensive GPUs every time accuracy dips, you are effectively running a manual process disguised as automation. High-performing enterprise operations replace this reactive cycle with automated pipelines that treat data ingestion, annotation, and validation as a background utility rather than an emergency repair.

This infrastructure allows your computer vision system to ingest new data from today’s edge cases and convert them into the training sets for tomorrow without a total architectural overhaul.

A mature pipeline transforms your AI from a static tool into a self-healing asset that adapts to the gradual shifts of a live environment. By standardizing the flow from production inference to human-in-the-loop validation and back into the model, you ensure that the cost of retraining remains a predictable, flat operating expense rather than an escalating technical debt.

The goal is to move beyond the break-fix mentality of traditional software support and toward a model of continuous optimization where your machine learning models incrementally improve their own benchmarks through every hour of operation.

Maintaining model performance in a dynamic enterprise environment requires shifting your perspective from a one-time deployment to a continuous operational lifecycle. While model degradation is an inevitable byproduct of environmental shift, it only becomes a critical failure when an organization lacks the pipelines and feedback loops necessary to capture and annotate new data. High-performing AI systems are not defined by the static benchmarks they achieve in a lab, but by the robustness of the processes that keep their outputs aligned with real-world conditions. To ensure your computer vision investment compounds in value rather than depreciating into technical debt, you must prioritize the human-in-the-loop infrastructure that detects silent failure before it impacts your bottom line.

If your current computer vision initiatives are struggling with accuracy or you are planning a high-stakes deployment, your strategy must include a dedicated system for continuous validation. See how Invisible builds and maintains production-grade computer vision models that adapt to your specific operational reality.

The primary reason is data drift, where the real-world environment changes in ways the model wasn't prepared for during its initial training. This can be as simple as changing lighting, new camera hardware, or variations in the physical appearance of objects. Because machine learning models are trained on specific datasets, they lack the context to understand that a change in shadows or angles shouldn't change the classification of an object. Without a system to identify these new conditions and feed them back into the model through retraining, accuracy will inevitably decline.

There is no universal schedule, as the frequency depends on the volatility of your operational environment. A contact center processing standardized digital forms may only need quarterly updates, while a manufacturing facility with changing product lines or a retail environment with seasonal shifts might require weekly or even daily retraining. The best approach is to monitor performance metrics in real-time. When the model’s confidence scores begin to trend downward or manual intervention rates climb, that is your signal that the retraining pipeline needs to be triggered.

While a larger, more diverse dataset helps with initial robustness, it cannot future-proof a model against the unknown. More data at the start can reduce overfitting, but it cannot account for concept drift—such as new safety regulations or product designs that don't exist yet. AI models are not set-and-forget tools. High-quality initial data gives you a better starting point, but the only way to prevent long-term degradation is through a continuous feedback loop that incorporates new data from the production environment as it arrives.

Beyond standard technical benchmarks like F1-score or Mean Average Precision, operations leaders should track the Cost of Manual Intervention (CMI) and the False Negative Rate in production. CMI measures how often a human must step in to correct or verify a model output; if this is rising, your model is degrading. Additionally, tracking the shift in the data distribution of your input can provide a leading indicator of drift before it impacts your accuracy. If the pixels coming in look significantly different from your training datasets, failure is imminent.

For most enterprises, the value is not in building the underlying infrastructure but in the quality of the data and the specific business logic applied to the workflows. Using established machine learning models and Python libraries as a base is standard, but the "secret sauce" is the human-in-the-loop layer that ensures the labels are accurate for your specific context. A deployment partner who manages the full end-to-end lifecycle—from data annotation to model monitoring—is often more effective than an in-house team trying to build every component of a custom MLOps stack from scratch.