This guide is for enterprise leaders evaluating Scale AI and its competitors for AI projects. It is also for AI teams who need more than a high-volume data labeling platform, including foundation model and LLM teams seeking support across edge cases, RLHF, and fine-tuning.

—

Enterprise and frontier AI teams are running into a familiar problem: the tools that got them to proof of concept weren't designed to get them to production.

Data labeling platforms were built to solve a specific, well-defined problem: producing high-quality training data and annotated datasets at scale. For a certain stage of AI development, that's exactly what was needed. But as AI teams move from building models to deploying them in real-world domains like healthcare and computer vision, the requirements change significantly.

Teams need domain-specific expertise that generic annotation workforces can't provide. They need workflows that connect training data to live systems, not just benchmarks. They need end-to-end partners who stay involved when edge cases surface in production, not providers whose job ends when the dataset is delivered.

Scale AI is the most recognized name in data labeling, and for high-volume annotation work it has a strong track record. But as AI programs mature, "data labeling at scale" and "production-ready AI" are increasingly different problems, and more teams are discovering that gap firsthand.

That's why the market for Scale AI alternatives has grown considerably. Organizations aren't looking for a cheaper version of what Scale AI does. They're looking for fundamentally different support.

In this guide, we compare Scale AI with its main competitors, explain what each category of provider is actually built for, and show where Invisible is a better fit for AI teams operating beyond the annotation stage.

Scale AI is a U.S.-based artificial intelligence (AI) company, now 49% owned by Meta, that helps machine learning (ML) teams prepare training data at scale and evaluate ML models. It combines automated annotation and labeling tools with human review to produce labeled, structured datasets for AI applications.

Scale AI offers the following capabilities:

Scale AI is a strong fit for companies that need large volumes of high-quality annotated data for general-purpose tasks. It works well when teams want a managed labeling platform with human review, dataset management tooling, and support for in-house AI models.

As AI teams move from experimentation to production, some limits of labeling-centered platforms start to surface. This section covers the common gaps AI teams encounter when annotation is the core offering, especially for long-running, complex AI programs.

Labeling platforms are built to produce annotated datasets efficiently. They are not designed to own the full AI workflow. Data unification, production monitoring, workflow orchestration, and business integration usually live outside the platform. Teams often manage those pieces on their own.

Cost can also become harder to predict over time. Labeling work is typically priced based on data type, volume, and service level. As AI projects evolve and requirements change, forecasting spend for long-running AI initiatives becomes more difficult.

Labeling platforms are optimized for broad coverage across many task types. But projects in areas such as healthcare, contact centers, or complex operations often surface edge cases that require sustained human judgment and domain expertise. High throughput alone doesn’t always solve those problems.

Labeling platforms are usually built for fast startup growth. In regulated or high-risk environments, teams often need clearer controls for data access, work review, and decision documentation over time. Those requirements are not always a core focus of annotation-first platforms.

Not all Scale AI competitors offer the same type of support. Some focus on tooling, others on managed services, and a smaller group operates as end-to-end AI partners. The table below breaks down these categories and how they differ in practice.

Scale AI works well when the primary goal is labeling data efficiently. But as AI programs mature, many teams find that labeling alone is no longer the main constraint. That’s usually the point where alternatives like Invisible may be more helpful.

There are a few clear signals that annotation is no longer the main constraint in your AI program:

Teams working on advanced models often need faster iteration, feedback loops, and control over how data is used, not just more labeled samples.

If the hard part is connecting labeled data to APIs, live systems, dashboards, or KPIs, labeling speed alone won’t solve the problem.

Areas like contact centers, complex operations, or specialist healthcare involve nuance and edge cases that don’t fit clean, standardized labeling workflows.

Below are a few situations where a Scale AI alternative like Invisible is a better fit:

Nuanced NLP, long-tail LLM behavior, and complex domain rules often require human-in-the-loop subject-matter experts (SMEs), not just general annotators.

Teams focused on outcomes like conversion rates, average handle time, or accuracy in AI-driven agents tend to value partners who stay involved beyond “X million labels delivered.”

While many platforms focus primarily on data labeling, Invisible combines data work, human expertise, AI model training, and operational automation into end-to-end AI systems. Annotation is just one part of the stack, not the core product.

Here’s what differentiates Invisible from Scale AI and its competitors:

Invisible offers a modular platform that supports multiple stages of the AI lifecycle, from data integration to workflow automation and performance evaluation. Its flexible data infrastructure, Neuron, integrates and transforms structured and unstructured data, while automation layers connect AI work to real systems and business KPIs.

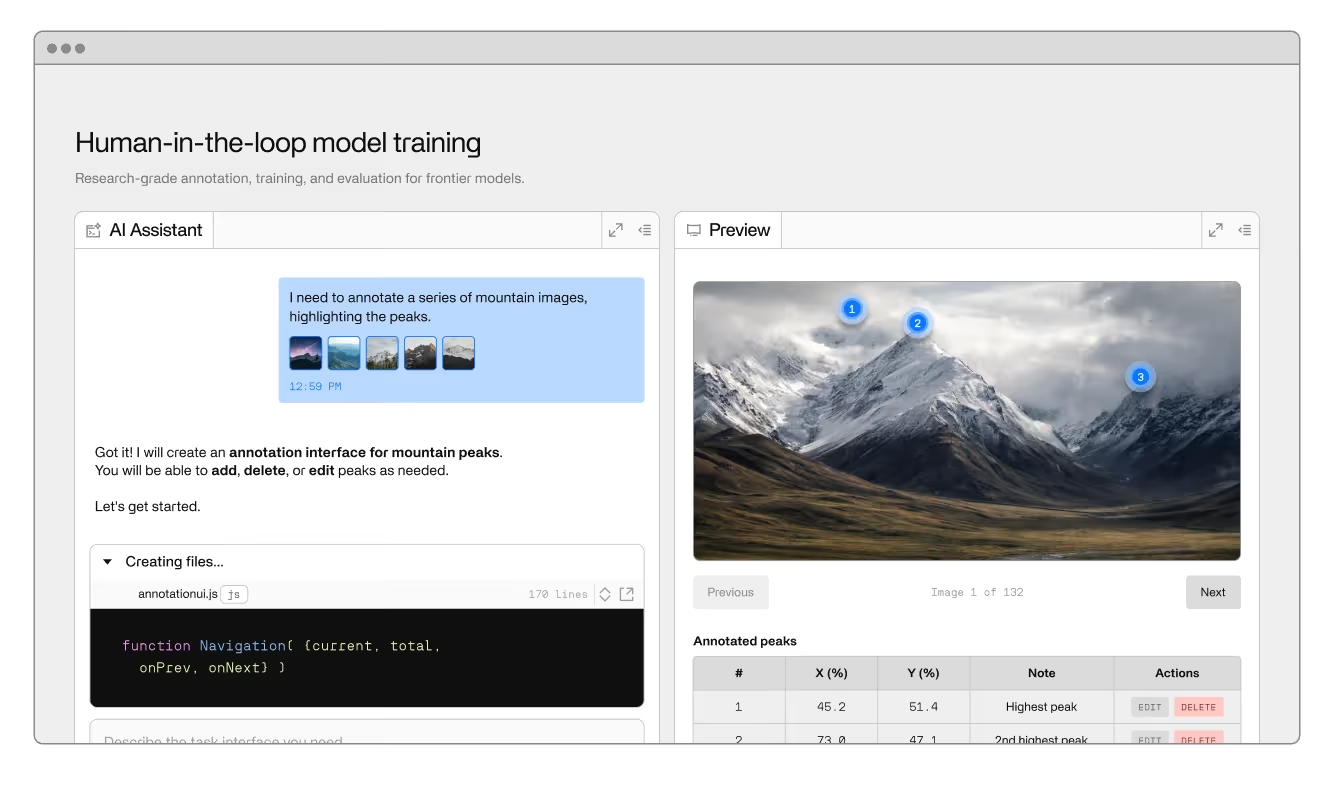

Invisible provides edge-case curation, RLHF, fine-tuning, and expert validation for foundation model and LLM labs. The platform supports domain-specific expert involvement and AI-powered labeling combined with deep human-in-the-loop quality assurance.

Invisible is built to help enterprises deploy AI safely and effectively. It supports governance, data access controls, and change management that align with how large organizations operate. From day one, it can handle complex org structures, multiple providers, hybrid infrastructure, and regulatory requirements.

Here’s a comparison of Scale AI and its competitors to help you make an informed choice.

Here’s where each fits best:

Choosing the right AI partner or platform requires teams to focus on how a solution fits into their actual workflows and production goals.

Clarify the problem you want the solution to solve. For instance, ask if you are trying to improve model performance, enable a new product, or simply generate a dataset. Understanding the real problem will guide the choice of provider or approach.

Evaluate annotation in the context of how it supports training and iteration. This includes how labeled data feeds into fine-tuning loops, LLM training, RLHF, and ongoing model evaluation. If labels cannot be easily integrated into these processes, their value is limited.

Not all data can be handled by generic labeling workforces. Teams should assess whether their use cases require domain expertise, such as clinicians, underwriters, or support quality analysts. They should also determine whether human-in-the-loop review cycles are needed to handle edge cases.

If your team has the resources to manage infrastructure, quality control, and workflows, a platform or open-source tool may suffice. If bandwidth is limited or the use case is complex, an end-to-end partner that provides expertise and operational support may be a better fit.

Evaluate Scale AI competitors based on how they handle your needs:

For teams already using Scale AI or other labeling platforms, working with Invisible usually starts with a refocus rather than a full replacement. The conversation shifts from producing labeled data to improving how data supports real-time model behavior and production outcomes.

For foundation labs and model providers, this typically means scoping work around concrete gaps in training data, edge cases, and evaluation or safety needs. Labeling and RLHF efforts are designed around where models struggle in practice, not around generic benchmarks or volume targets.

For enterprise teams, the work often begins with a review of existing datasets, workflows, and AI applications such as contact centers, vision systems, or operational tools. Invisible teams usually test the approach through a focused pilot on a single use case before expanding further.

In many cases, Invisible runs alongside existing providers at first. Legacy labels are reused where they make sense, while more complex workflows gradually move to Invisible. Over time, Invisible's role shifts from labeling vendor to end-to-end AI and operations partner.

As AI programs mature, the primary challenge often shifts from producing labeled data to ensuring that AI systems operate reliably in real-world production environments.

Invisible can help teams that are facing this exact challenge. It works with frontier and enterprise AI teams to connect data, human expertise, and models into operational systems. Rather than focusing only on annotation, Invisible supports the full path from training data and evaluation through production workflows and measurable outcomes.

See how we work or request a demo to see how Invisible would approach one or two of your highest-impact AI projects differently, especially in contact centers, computer vision, and other high-stakes applications.

Scale AI is known for data labeling and training data services. It handles parts of the ML workflow well, but labeling remains the core of what most teams use it for.

If your main need is labeling, consider Labelbox, Encord, or SuperAnnotate for tool-driven workflows. Appen and similar providers are typically used for large managed-service projects. Teams that want to manage everything in-house sometimes use open-source tools like CVAT or Label Studio instead.

Invisible makes more sense when labeling isn’t the main problem anymore. Teams usually reach that point when they’re dealing with edge cases, RLHF, fine-tuning, or when models need to work reliably in real production workflows.

Yes. Many teams run Scale AI or other labeling platforms alongside Invisible. Existing labeled data can often be reused, while Invisible supports more complex workflows, model evaluation, and production systems. This coexistence allows teams to evolve without replacing everything at once.

Human-in-the-loop review and SME involvement are essential for complex or high-risk use cases. Generic labeling works for simple tasks. For nuanced language, domain rules, or edge cases, you usually need people who actually understand the problem and the model’s behavior.

Labeling platforms are mostly priced by volume and task type. End-to-end partners are better compared by outcomes like model performance, production stability, and the amount of internal effort they replace. The “cheapest” option depends on what problem you’re really solving.